Security '03 Paper

[Security '03 Technical Program]

Remote Timing Attacks are Practical

David Brumley, Stanford University, dbrumley@cs.stanford.edu

Dan Boneh

Abstract

Timing attacks are usually used to attack weak computing devices such as smartcards. We show that timing attacks apply to general software systems. Specifically, we devise a timing attack against OpenSSL. Our experiments show that we can extract private keys from an OpenSSL-based web server running on a machine in the local network. Our results demonstrate that timing attacks against network servers are practical and therefore security systems should defend against them.

1 Introduction

Timing attacks enable an attacker to extract secrets maintained in a security system by observing the time it takes the system to respond to various queries. For example, Kocher [10] designed a timing attack to expose secret keys used for RSA decryption. Until now, these attacks were only applied in the context of hardware security tokens such as smartcards [4,10,18]. It is generally believed that timing attacks cannot be used to attack general purpose servers, such as web servers, since decryption times are masked by many concurrent processes running on the system. It is also believed that common implementations of RSA (using Chinese Remainder and Montgomery reductions) are not vulnerable to timing attacks. We challenge both assumptions by developing a remote timing attack against OpenSSL [15], an SSL library commonly used in web servers and other SSL applications. Our attack client measures the time an OpenSSL server takes to respond to decryption queries. The client is able to extract the private key stored on the server. The attack applies in several environments.

Many crypto libraries completely ignore the timing attack and have no defenses implemented to prevent it. For example, libgcrypt [14] (used in GNUTLS and GPG) and Cryptlib [5] do not defend against timing attacks. OpenSSL 0.9.7 implements a defense against the timing attack as an option. However, common applications such as mod_SSL, the Apache SSL module, do not enable this option and are therefore vulnerable to the attack. These examples show that timing attacks are a largely ignored vulnerability in many crypto implementations. We hope the results of this paper will help convince developers to implement proper defenses (see Section 6). Interestingly, Mozilla's NSS crypto library properly defends against the timing attack. We note that most crypto acceleration cards also implement defenses against the timing attack. Consequently, network servers using these accelerator cards are not vulnerable.

We chose to tailor our timing attack to OpenSSL since it is the most widely used open source SSL library. The OpenSSL implementation of RSA is highly optimized using Chinese Remainder, Sliding Windows, Montgomery multiplication, and Karatsuba's algorithm. These optimizations cause both known timing attacks on RSA [10,18] to fail in practice. Consequently, we had to devise a new timing attack based on [18,19,20,21,22] that is able to extract the private key from an OpenSSL-based server. As we will see, the performance of our attack varies with the exact environment in which it is applied. Even the exact compiler optimizations used to compile OpenSSL can make a big difference.

In Sections 2 and 3 we describe OpenSSL's implementation of RSA and the timing attack on OpenSSL. In Section 4 we discuss how these attacks apply to SSL. In Section 5 we describe the actual experiments we carried out. We show that using about a million queries we can remotely extract a 1024-bit RSA private key from an OpenSSL 0.9.7 server. The attack takes about two hours.

Timing attacks are related to a class of attacks called side-channel attacks. These include power analysis [9] and attacks based on electromagnetic radiation [16]. Unlike the timing attack, these extended side channel attacks require special equipment and physical access to the machine. In this paper we only focus on the timing attack. We also note that our attack targets the implementation of RSA decryption in OpenSSL. Our timing attack does not depend upon the RSA padding used in SSL and TLS.


RSA operations, including those using Montgomery's method, must make use of a multi-precision integer multiplication routine. OpenSSL implements two multiplication routines: Karatsuba (sometimes called recursive) and ``normal''. Multi-precision libraries represent large integers as a sequence of words. OpenSSL uses Karatsuba multiplication when multiplying two numbers with an equal number of words. Karatsuba multiplication takes time $O(n^{\log_2 3})$, which is $O(n^{1.58})$. OpenSSL uses normal multiplication, which runs in time $O(nm)$, when multiplying two numbers with an unequal number of words of size $n$ and $m$. Hence, for numbers that are approximately the same size (i.e. $n$ is close to $m$) normal multiplication takes quadratic time.

Thus, OpenSSL's integer multiplication routine leaks important timing information. Since Karatsuba is typically faster, multiplying two numbers with an unequal number of words takes longer than multiplying two numbers with an equal number of words. Time measurements will reveal how frequently the operands given to the multiplication routine have the same length. We use this fact in the timing attack on OpenSSL.
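To make the cost gap between the two routines concrete, the following is a minimal Python sketch, not OpenSSL's code. It assumes 32-bit words and operands whose word count is a power of two, and it counts base-case multiplications as a rough proxy for running time; the function name `karatsuba` and the counter argument are illustrative only.

```python
WORD_BITS = 32  # word size used in the experiments described in the text


def karatsuba(x, y, words, counter):
    """Multiply x * y, where both fit in `words` 32-bit words.

    `words` is assumed to be a power of two. counter[0] is incremented once
    per base-case multiplication. The middle term may carry one extra bit,
    so a base case is only approximately a single word multiplication.
    """
    if words == 1:
        counter[0] += 1
        return x * y
    half = words // 2
    shift = half * WORD_BITS
    mask = (1 << shift) - 1
    x1, x0 = x >> shift, x & mask
    y1, y0 = y >> shift, y & mask
    z2 = karatsuba(x1, y1, half, counter)            # high * high
    z0 = karatsuba(x0, y0, half, counter)            # low * low
    z1 = karatsuba(x1 + x0, y1 + y0, half, counter) - z2 - z0  # cross terms
    return (z2 << (2 * shift)) + (z1 << shift) + z0


# Two 16-word (512-bit) operands, the size of one RSA factor for a 1024-bit key.
a = (1 << 512) - 12345
b = (1 << 511) + 67890
counter = [0]
product = karatsuba(a, b, 16, counter)
schoolbook_word_mults = 16 * 16  # normal multiplication: n * m word products
```

Under these assumptions the recursive routine performs 81 base-case multiplications where the normal routine performs 256, which is the kind of gap a timing measurement can pick up.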

In both algorithms, multiplication is ultimately done on individual words. The underlying word multiplication algorithm dominates the total time for a decryption. For example, in OpenSSL the underlying word multiplication routine typically takes ![]() of the total runtime. The time to multiply individual words depends on the number of bits per word. As we will see in experiment 3, the exact architecture on which OpenSSL runs has an impact on timing measurements used for the attack. In our experiments the word size was 32 bits.

So far we identified two algorithmic data dependencies in OpenSSL that cause time variance in RSA decryption: (1) Schindler's observation on the number of extra reductions in a Montgomery reduction, and (2) the timing difference due to the choice of multiplication routine, i.e. Karatsuba vs. normal. Unfortunately, the effects of these optimizations counteract one another.

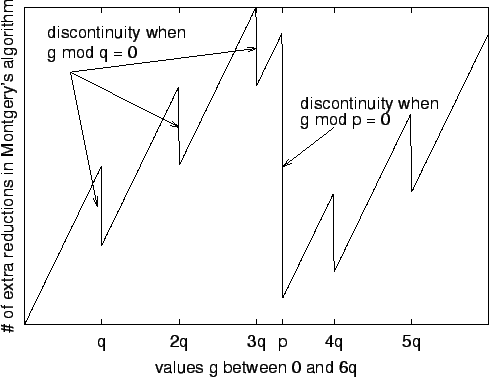

Consider a timing attack where we decrypt a ciphertext $g$. As $g$ approaches a multiple of the factor $q$ from below, equation (1) tells us that the number of extra reductions in a Montgomery reduction increases. When we are just over a multiple of $q$, the number of extra reductions decreases dramatically. In other words, decryption of $g < q$ should be slower than decryption of $g > q$.

The choice of Karatsuba vs. normal multiplication has the opposite effect. When $g$ is just below a multiple of $q$, then OpenSSL almost always uses fast Karatsuba multiplication. When $g$ is just over a multiple of $q$ then $g \bmod q$ is small and consequently most multiplications will be of integers with different lengths. In this case, OpenSSL uses normal multiplication which is slower. In other words, decryption of $g < q$ should be faster than decryption of $g > q$, the exact opposite of the effect of extra reductions in Montgomery's algorithm. Which effect dominates is determined by the exact environment. Our attack uses both effects, but each effect is dominant at a different phase of the attack.

Our attack exposes the factorization of the RSA modulus. Let $N = pq$ with $q < p$. We build approximations to $q$ that get progressively closer as the attack proceeds. We call these approximations guesses. We refine our guess by learning bits of $q$ one at a time, from most significant to least. Thus, our attack can be viewed as a binary search for $q$. After recovering the most significant half of the bits of $q$, we can use Coppersmith's algorithm [3] to retrieve the complete factorization.

Initially our guess $g$ of $q$ lies between $2^{512}$ (i.e. $2^{(\log_2 N)/2}$) and $2^{511}$ (i.e. $2^{(\log_2 N)/2 - 1}$). We then time the decryption of all possible combinations of the top few bits (typically 2-3). When plotted, the decryption times will show two peaks: one for $q$ and one for $p$. We pick the values that bound the first peak, which in OpenSSL will always be $q$.

Suppose we already recovered the top $i-1$ bits of $q$. Let $g$ be an integer that has the same top $i-1$ bits as $q$ and the remaining bits of $g$ are 0. Then $g < q$. At a high level, we recover the $i$'th bit of $q$ as follows:

Let $g_{hi}$ denote $g$ with the $i$'th bit set to 1, and let $t_1$ = DecryptTime($g$) and $t_2$ = DecryptTime($g_{hi}$). When the $i$'th bit is 0, the ``large'' difference can either be negative or positive. In this case, if $t_1 - t_2$ is positive then DecryptTime($g$) $>$ DecryptTime($g_{hi}$), and the Montgomery reductions dominated the time difference. If $t_1 - t_2$ is negative, then DecryptTime($g$) $<$ DecryptTime($g_{hi}$), and the multi-precision multiplication dominated the time difference.
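The bit-by-bit search can be sketched in Python as follows. This is a toy illustration rather than the attack code: the `gap` callback stands in for a measured absolute time difference between decrypting $g$ and decrypting $g$ with the next bit set, and the threshold, the 32-bit value `Q`, and all function names are hypothetical.

```python
def recover_bits(gap, g, first_bit, count, threshold):
    """Recover `count` bits of the secret factor, most significant first.

    g         -- current guess: known top bits of q, remaining bits zero
    first_bit -- position of the first unknown bit
    gap       -- callable(g, g_hi) returning the absolute time difference
    threshold -- differences below this are treated as "small"
    """
    for pos in range(first_bit, first_bit - count, -1):
        g_hi = g | (1 << pos)
        # Small difference: g < g_hi < q, so the bit of q is 1 and we keep it.
        # Large difference: g < q < g_hi, so the bit of q is 0.
        if gap(g, g_hi) < threshold:
            g = g_hi
    return g


# Toy stand-in for the timing side channel; a real attacker cannot see Q.
Q = 0xC5A391F7


def toy_gap(g, g_hi):
    return 1000 if g < Q < g_hi else 10


start = 1 << 31  # the top bit of the factor is known to be 1
recovered = recover_bits(toy_gap, start, 30, 15, 500)
```

With this idealized oracle the loop recovers the top half of `Q`; in a real measurement the gap is noisy, which is why the decision needs care.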

Formatting of RSA plaintext, e.g. PKCS 1, does not affect this timing attack. We also do not need the value of the decryption, only how long the decryption takes.

We would like $|t_{g_1} - t_{g_2}| \gg |t_{g_3} - t_{g_4}|$ when $g_1 < q < g_2$ and $g_3 < g_4 < q$. Time measurements that have this property we call a strong indicator for bits of $q$, and those that do not are a weak indicator for bits of $q$. Square and multiply exponentiation results in a strong indicator because there are approximately $\frac{\log_2 d}{2}$ multiplications by $g$ during decryption. However, in sliding windows with window size $w$ ($w = 5$ in OpenSSL) the expected number of multiplications by $g$ is only:

$$E[\text{\# multiplications by } g] \approx \frac{\log_2 d}{2^{w-1}(w+1)}$$
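As a rough illustration of why sliding windows weaken the indicator, the sketch below implements a generic left-to-right sliding-window exponentiation in Python and counts how often the running product is multiplied by $g$ itself (a window whose value is exactly 1). It is not OpenSSL's implementation; the window size of 5 and the function name are assumptions made for the example.

```python
def sliding_window_pow(g, d, n, w=5):
    """Compute g**d mod n with sliding windows of at most w bits.

    Returns (result, mults_by_g), where mults_by_g counts the
    multiplications whose second operand is g itself.
    """
    g2 = g * g % n
    table = {1: g % n}  # odd powers g^1, g^3, ..., g^(2^w - 1)
    for k in range(3, 1 << w, 2):
        table[k] = table[k - 2] * g2 % n

    result = 1
    mults_by_g = 0
    bits = bin(d)[2:]
    i = 0
    while i < len(bits):
        if bits[i] == '0':
            result = result * result % n
            i += 1
            continue
        # Longest window of at most w bits that starts here and ends in a 1.
        j = min(i + w, len(bits))
        while bits[j - 1] == '0':
            j -= 1
        window = int(bits[i:j], 2)
        for _ in range(j - i):
            result = result * result % n
        result = result * table[window] % n
        if window == 1:
            mults_by_g += 1
        i = j
    return result, mults_by_g


result, by_g = sliding_window_pow(7, 0b1011001, 1009)
```

For comparison, plain square-and-multiply multiplies by $g$ once for every set bit of the exponent, so the same exponent would involve several such multiplications instead of one.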

To overcome this we query at a neighborhood of values $g, g+1, g+2, \ldots, g+n$, and use the result as the decrypt time for $g$ (and similarly for $g_{hi}$). The total decryption time for $g$ or $g_{hi}$ is then:

$$T_g = \sum_{i=0}^{n} \mathrm{DecryptTime}(g+i)$$

We define $T_g$ as the time to compute $g$ with sliding windows when considering a neighborhood of values. As $n$ grows, $|T_g - T_{g_{hi}}|$ typically becomes a stronger indicator for a bit of $q$ (at the cost of additional decryption queries).
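The neighborhood sum is straightforward to express in code. The Python sketch below assumes a caller-supplied `decrypt_time` function that returns one timing measurement for one query; that function and the name `neighborhood_time` are placeholders for illustration.

```python
def neighborhood_time(decrypt_time, g, n):
    """Sum the decryption times of g, g+1, ..., g+n (n+1 queries)."""
    return sum(decrypt_time(g + i) for i in range(n + 1))


# Toy check with a fake "timer" that simply returns its argument.
toy_total = neighborhood_time(lambda x: x, 10, 4)  # 10 + 11 + 12 + 13 + 14
```

The two totals for a guess and its counterpart with the next bit set would then be compared to form the indicator.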

As mentioned in the introduction there are a number of scenarios where the timing attack applies to networked servers. We discuss an attack on SSL applications, such as stunnel [23] and an Apache web server with mod_SSL [12], and an attack on trusted computing projects such as Microsoft's NGSCB (formerly Palladium).

During a standard full SSL handshake the SSL server performs an RSA decryption using its private key. The SSL server decryption takes place after receiving the CLIENT-KEY-EXCHANGE message from the SSL client. The CLIENT-KEY-EXCHANGE message is composed on the client by encrypting PKCS 1 padded random bytes with the server's public key. The randomness encrypted by the client is used by the client and server to compute a shared master secret for end-to-end encryption.

Upon receiving a CLIENT-KEY-EXCHANGE message from the client, the server first decrypts the message with its private key and then checks the resulting plaintext for proper PKCS 1 formatting. If the decrypted message is properly formatted, the client and server can compute a shared master secret. If the decrypted message is not properly formatted, the server generates its own random bytes for computing a master secret and continues the SSL protocol. Note that an improperly formatted CLIENT-KEY-EXCHANGE message prevents the client and server from computing the same master secret, ultimately leading the server to send an ALERT message to the client indicating the SSL handshake has failed.

In our attack, the client replaces the properly formatted CLIENT-KEY-EXCHANGE message with our guess $g$. The server decrypts $g$ as a normal CLIENT-KEY-EXCHANGE message, and then checks the resulting plaintext for proper PKCS 1 padding. Since the decryption of $g$ will not be properly formatted, the server and client will not compute the same master secret, and the client will ultimately receive an ALERT message from the server. The attacking client takes the time from sending $g$ as the CLIENT-KEY-EXCHANGE message to receiving the response message from the server as the time to decrypt $g$. The client repeats this process for each value of $g$ and $g_{hi}$ needed to calculate $T_g$ and $T_{g_{hi}}$.

Our experiments are also relevant to trusted computing efforts such as NGSCB. One goal of NGSCB is to provide sealed storage. Sealed storage allows an application to encrypt data to disk using keys unavailable to the user. The timing attack shows that by asking NGSCB to decrypt data in sealed storage a user may learn the secret application key. Therefore, it is essential that the secure storage mechanism provided by projects such as NGSCB defend against this timing attack.

As mentioned in the introduction, RSA applications (and subsequently SSL applications using RSA for key exchange) using a hardware crypto accelerator are not vulnerable since most crypto accelerators implement defenses against the timing attack. Our attack applies to software based RSA implementations that do not defend against timing attacks as discussed in Section 6.

We performed a series of experiments to demonstrate the effectiveness of our attack on OpenSSL. In each case we show the factorization of the RSA modulus $N$ is vulnerable. We show that a number of factors affect the efficiency of our timing attack.

Our experiments consisted of:

The first four experiments were carried out inter-process via TCP, and directly characterize the vulnerability of OpenSSL's RSA decryption routine. The fifth experiment demonstrates our attack succeeds on the local network. The last experiment demonstrates our attack succeeds on the local network against common SSL-enabled applications.

Our attack was performed against OpenSSL 0.9.7, which does not blind RSA operations by default. All tests were run under RedHat Linux 7.3 on a 2.4 GHz Pentium 4 processor with 1 GB of RAM, using gcc 2.96 (RedHat). All keys were generated at random via OpenSSL's key generation routine.

For the first 5 experiments we implemented a simple TCP server that read an ASCII string, converted the string to OpenSSL's internal multi-precision representation, then performed the RSA decryption. The server returned 0 to signify the end of decryption. The TCP client measured the time from writing the ciphertext over the socket to receiving the reply.

Our timing attack requires a clock with fine resolution. We use the Pentium cycle counter on the attacking machine as such a clock, giving us a time resolution of 2.4 billion ticks per second. The cycle counter increments once per clock tick, regardless of the actual instruction issued. Thus, the decryption time is the cycle counter difference between sending the ciphertext and receiving the reply. The cycle counter is accessible via the ``rdtsc'' instruction, which returns the 64-bit cycle count since CPU initialization. The high 32 bits are returned into the EDX register, and the low 32 bits into the EAX register. As recommended in [7], we use the ``cpuid'' instruction to serialize the processor to prevent out-of-order execution from changing our timing measurements. Note that cpuid and rdtsc are only used by the attacking client, and that neither instruction is a privileged operation. Other architectures have a similar counter, such as the UltraSparc %tick register.
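The client-side measurement can be sketched in Python as below. This is only an approximation of the setup described above: it substitutes `time.perf_counter_ns` for the rdtsc cycle counter, and it replaces the real TCP decryption server with a toy thread that reads a request and answers with a single `0` byte. All names are illustrative.

```python
import socket
import threading
import time


def time_query(sock, ciphertext):
    """Send one query and return (reply, elapsed_ns) for the round trip."""
    start = time.perf_counter_ns()  # stand-in for reading the cycle counter
    sock.sendall(ciphertext)
    reply = sock.recv(1)            # server signals completion with one byte
    elapsed_ns = time.perf_counter_ns() - start
    return reply, elapsed_ns


def toy_server(sock):
    """Pretend to decrypt: read the request, then reply with b'0'."""
    sock.recv(4096)
    sock.sendall(b"0")


client, server = socket.socketpair()
worker = threading.Thread(target=toy_server, args=(server,))
worker.start()
reply, elapsed_ns = time_query(client, b"1234567890")
worker.join()
client.close()
server.close()
```

A monotonic nanosecond clock is much coarser and noisier than a serialized cycle counter read, so this sketch shows the shape of the measurement rather than its precision.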

OpenSSL generates RSA moduli $N = pq$ where $q < p$. In each case we target the smaller factor, $q$. Once $q$ is known, the RSA modulus is factored and, consequently, the server's private key is exposed.


This experiment explores the parameters that determine the number of queries needed to expose a single bit of an RSA factor. For any particular bit of $q$, the number of queries for guess $g$ is determined by two parameters: neighborhood size and sample size.

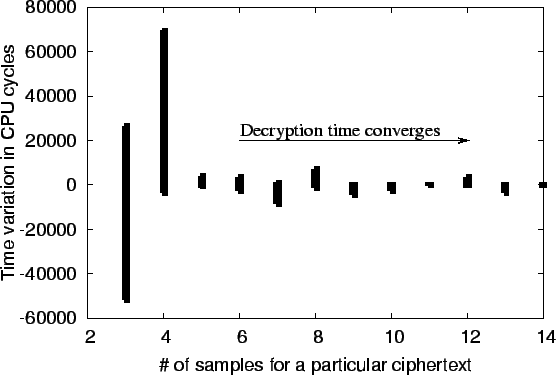

To overcome the effects of a multi-user environment, we repeatedly sample $g + k$ and use the median time value as the effective decryption time. Figure 2 shows the difference between median values as sample size increases. The number of samples required to reach a stable decryption time is surprisingly small, requiring only 5 samples to give a variation of under ![]() cycles (approximately 8 microseconds), well under that needed to perform a successful attack.
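Taking the median of repeated measurements is simple to sketch in Python. The `measure` callback below is a hypothetical function returning one timing for one query; the default of 7 samples mirrors the sample size used in the later experiments.

```python
import statistics


def effective_time(measure, query, samples=7):
    """Time the same query `samples` times and return the median reading."""
    return statistics.median(measure(query) for _ in range(samples))


# Toy readings with one large outlier, as a busy machine might produce.
readings = iter([100, 102, 5000, 101, 99, 100, 103])
median_time = effective_time(lambda q: next(readings), query=42)
```

The median discards the occasional very slow reading, which a mean would not.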

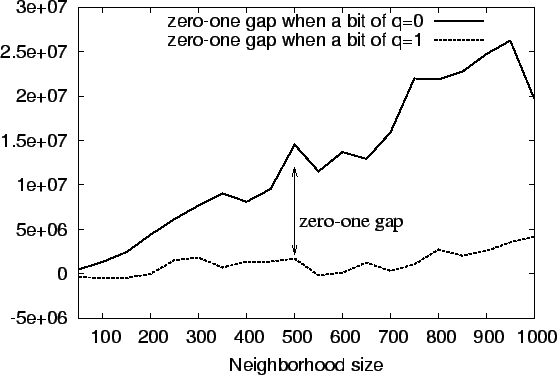

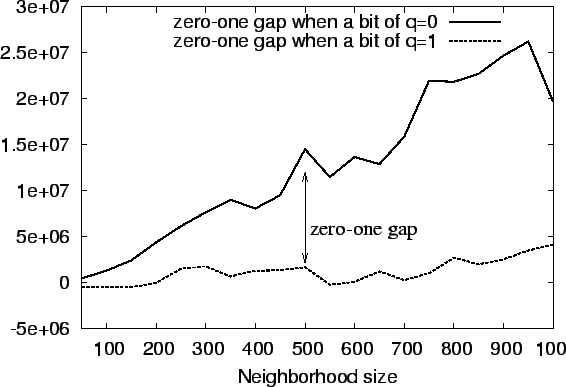

We call the gap between when a bit of $q$ is 0 and 1 the zero-one gap. This gap is related to the difference $|T_g - T_{g_{hi}}|$, which we expect to be large when a bit of $q$ is 0 and small otherwise. The larger the gap, the stronger the indicator that bit $i$ is 0, and the smaller the chance of error. Figure 3 shows that increasing the neighborhood size increases the size of the zero-one gap when a bit of $q$ is 0, but is steady when a bit of $q$ is 1.

The total number of queries to recover a factor is $2ns \cdot \log_2(N)/4$, where $n$ is the neighborhood size, $s$ is the sample size, and $N$ is the RSA public modulus. Unless explicitly stated otherwise, we use a sample size of 7 and a neighborhood size of 400 on all subsequent experiments, resulting in $1433600$ total queries. With these parameters a typical attack takes approximately 2 hours. In practice, an effective attack may need far fewer samples, as the neighborhood size can be adjusted dynamically to give a clear zero-one gap in the smallest number of queries.
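The query budget can be checked with a few lines of Python. The sketch assumes that two guesses are timed per bit, that each is queried over the whole neighborhood with every value sampled repeatedly, and that a quarter of the modulus bits (half the bits of one factor) must be recovered; the function name is illustrative.

```python
def total_queries(modulus_bits, neighborhood, samples):
    """Queries needed to recover the top half of one factor's bits."""
    bits_to_recover = modulus_bits // 4  # half of a factor that is half of N
    return 2 * neighborhood * samples * bits_to_recover


queries = total_queries(modulus_bits=1024, neighborhood=400, samples=7)
```

For a 1024-bit modulus with a neighborhood of 400 and 7 samples this gives 1433600 queries, in line with the figure of about 1.4 million quoted for the experiments.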


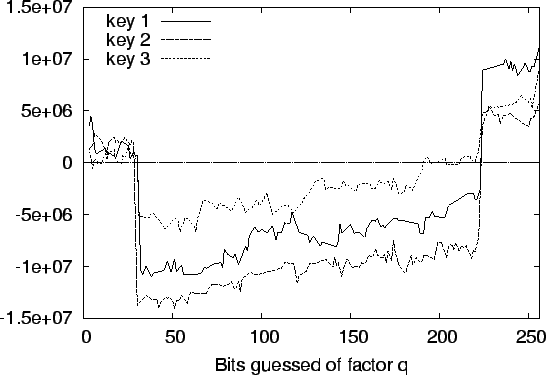

We attacked several 1024-bit keys, each randomly generated, to determine the ease of breaking different moduli. In each case we were able to recover the factorization of $N$. Figure 4 shows our results for 3 different keys. For clarity, we include only bits of $q$ that are 0, as bits of $q$ that are 1 are close to the $x$-axis. In all our figures the time difference $T_g - T_{g_{hi}}$ is the zero-one gap. When the zero-one gap for bit $i$ is far from the $x$-axis we can correctly deduce that bit $i$ is 0.

With all keys the zero-one gap is positive for about the first 32 bits due to Montgomery reductions, since both $g$ and $g_{hi}$ use Karatsuba multiplication. After bit 32, the difference between Karatsuba and normal multiplication dominates until overcome by the sheer size difference between $\log_2(g \bmod q)$ and $\log_2(g_{hi} \bmod q)$. The size difference alters the zero-one gaps because as bits of $q$ are guessed, $g_{hi} \bmod q$ becomes smaller while $g \bmod q$ remains $\approx q$. The size difference counteracts the effects of Karatsuba vs. normal multiplication. Normally the resulting zero-one gap shift happens around multiples of 32 (224 for key 1, 191 for key 2 and 3), our machine word size. Thus, an attacker should be aware that the zero-one gap may flip signs when guessing bits that are around multiples of the machine word size.

As discussed previously we can increase the size of the neighborhood to increase $|T_g - T_{g_{hi}}|$, giving a stronger indicator. Figure 5 shows the effects of increasing the neighborhood size from 400 to 800 to increase the zero-one gap, resulting in a strong enough indicator to mount a successful attack on bits 190-220 of $q$ in key 3.

The results of this experiment show that our timing attack exposes the factorization of each key through the zero-one gap created by the difference when a bit of $q$ is 0 or 1. The zero-one gap can be increased by increasing the neighborhood size if hard-to-guess bits are encountered.

In this experiment we show how the computer architecture and common compile-time optimizations can affect the zero-one gap in our attack. Previously, we have shown how algorithmically the number of extra Montgomery reductions and whether normal or Karatsuba multiplication is used results in a timing attack. However, the exact architecture on which decryption is performed can change the zero-one gap.

To show the effect of architecture on the timing attack, we begin by showing the total number of instructions retired agrees with our algorithmic analysis of OpenSSL's decryption routines. An instruction is retired when it completes and the results are written to the destination [8]. However, programs with similar retirement counts may have different execution profiles due to different run-time factors such as branch predictions, pipeline throughput, and the L1 and L2 cache behavior.

We show that minor changes in the code can change the timing attack in two programs: ``regular'' and ``extra-inst''. Both programs time local calls to the OpenSSL decryption routine, i.e. unlike the other programs presented, ``regular'' and ``extra-inst'' are not network clients attacking a network server. The ``extra-inst'' program is identical to ``regular'' except for 6 additional nop instructions inserted before timing decryptions. The nop's only change subsequent code offsets, including those in the linked OpenSSL library.

Table 1 shows the timing attack with both programs for two bits of $q$. Montgomery reductions cause a positive instruction retired difference for bit 30, as expected. The difference between Karatsuba and normal multiplication causes a negative instruction retired difference for bit 32, again as expected. However, the difference $T_g - T_{g_{hi}}$ does not follow the instructions retired difference. On bit 30, there is about a 4 million extra cycles difference between the ``regular'' and ``extra-inst'' programs, even though the instruction retired count decreases. For bit 32, the change is even more pronounced: the zero-one gap changes sign between the ``regular'' and ``extra-inst'' programs while the instructions retired are similar!

Extensive profiling using Intel's VTune [6] shows no single cause for the timing differences. However, two of the most prevalent factors were the L1 and L2 cache behavior and the number of instructions speculatively executed incorrectly. For example, while the ``regular'' program suffers approximately ![]() L1 and L2 cache misses per load from memory on average, ``extra-inst'' has approximately ![]() L1 and L2 cache misses per load. Additionally, the ``regular'' program speculatively executed about 9 million micro-operations incorrectly. Since the timing difference detected in our attack is only about ![]() of total execution time, we expect the run-time factors to heavily affect the zero-one gap. However, under normal circumstances some zero-one gap should be present due to the input data dependencies during decryption.

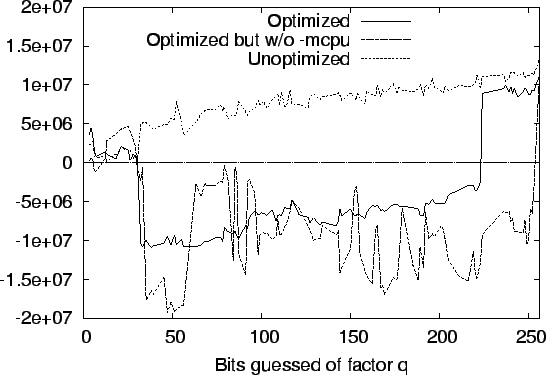

The total number of decryption queries required for a successful attack also depends upon how OpenSSL is compiled. The compile-time optimizations change both the number of instructions, and how efficiently instructions are executed on the hardware. To test the effects of compile-time optimizations, we compiled OpenSSL three different ways:

Each different compile-time optimization changed the zero-one gap. Figure 6 compares the results of each test. For readability, we only show the difference $T_g - T_{g_{hi}}$ when bit $i$ of $q$ is 0 ($g < q < g_{hi}$). The case where bit $i = 1$ shows little variance based upon the optimizations, and the $x$-axis can be used for reference.


Recall we expected Montgomery reductions to dominate when guessing the first 32 bits (with a positive zero-one gap), switching to Karatsuba vs. normal multiplication (with a negative zero-one gap) thereafter. Surprisingly, the unoptimized OpenSSL is unaffected by the Karatsuba vs. normal multiplication. Another surprising difference is that the zero-one gap is more erratic when the -mcpu flag is omitted.

In these tests we again made about 1.4 million decryption queries. We note that without optimizations (-g), separate tests allowed us to recover the factorization with less than 359000 queries. This number could be reduced further by dynamically reducing the neighborhood size as bits of ![]() are learned. Also, our tests of OpenSSL 0.9.6g were similar to the results of 0.9.7, suggesting previous versions of OpenSSL are also vulnerable.

One conclusion we draw is that users of binary crypto libraries may find it hard to characterize their risk to our attack without complete understanding of the compile-time options and exact execution environment. Common flags such as enabling debugging support allow our attack to recover the factors of a 1024-bit modulus in about ![]() million queries. We speculate that less complex architectures will be less affected by minor code changes, and have the zero-one gap as predicted by the OpenSSL algorithm analysis.


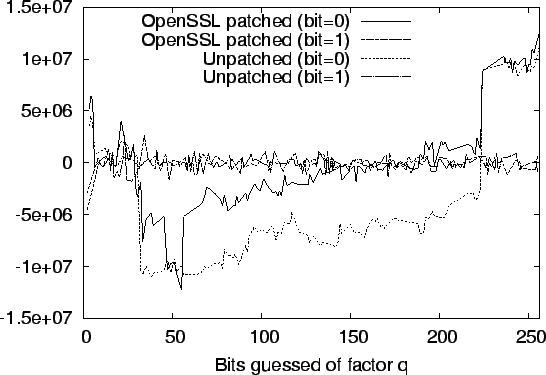

Source-based optimizations can also change the zero-one gap. RSA library developers may believe their code is not vulnerable to the timing attack based upon testing. However, subsequent patches may change the code profile, resulting in a timing vulnerability. To show that minor source changes also affect our attack, we implemented a minor patch that improves the efficiency of the OpenSSL 0.9.7 CRT decryption check. Our patch has been accepted for future incorporation into OpenSSL (tracking ID 475).

After a CRT decryption, OpenSSL re-encrypts the result (mod ![]() ) and verifies the result is identical to the original ciphertext. This verification step prevents an incorrect CRT decryption from revealing the factors of the modulus [2]. By default, OpenSSL needlessly recalculates both Montgomery parameters ![]() and ![]() on every decryption. Our minor patch allows OpenSSL to cache both values between decryptions with the same key. Our patch does not affect any other aspect of the RSA decryption other than caching these values. Figure 7 shows the results of an attack both with and without the patch.
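The re-encryption check described above can be illustrated with a minimal sketch. This is not OpenSSL's code: the function name `crt_decrypt_checked`, the toy primes, and the error handling are assumptions made for illustration, and Python integers are not constant time. It needs Python 3.8 or later for modular inverses via `pow`.

```python
def crt_decrypt_checked(c, p, q, d, e):
    """Toy RSA-CRT decryption followed by a re-encryption check."""
    n = p * q
    # Two half-size exponentiations, one modulo each prime factor.
    m_p = pow(c % p, d % (p - 1), p)
    m_q = pow(c % q, d % (q - 1), q)
    # Garner recombination: m = m_q + q * ((m_p - m_q) * q^{-1} mod p).
    q_inv = pow(q, -1, p)
    m = m_q + q * (((m_p - m_q) * q_inv) % p)
    # Re-encrypt and compare with the original ciphertext, so that an
    # incorrect CRT result is rejected instead of being returned.
    if pow(m, e, n) != c % n:
        raise ValueError("CRT result failed the re-encryption check")
    return m


# Toy parameters (far too small for real use).
p, q, e = 61, 53, 17
d = pow(e, -1, (p - 1) * (q - 1))
c = pow(42, e, p * q)
recovered = crt_decrypt_checked(c, p, q, d, e)
```

The check costs one extra public-key exponentiation per decryption, which is why values that depend only on the key are natural candidates for caching.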

The zero-one gap is shifted because the resulting code will have a different execution profile, as discussed in the previous experiment. While our specific patch decreases the size of the zero-one gap, other patches may increase the zero-one gap. This shows the danger of assuming a specific application is not vulnerable based on timing attack tests, as even a small patch can change the run-time profile and either increase or decrease the zero-one gap. Developers should instead rely upon proper algorithmic defenses as discussed in Section 6.


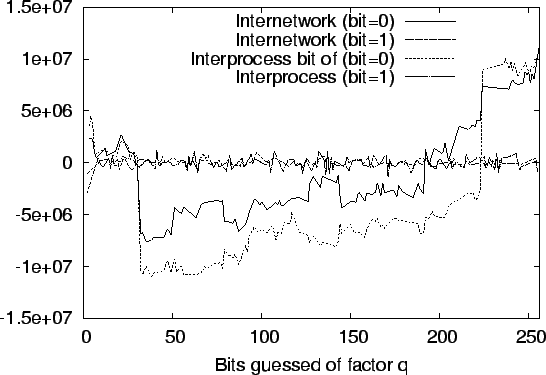

To show that local network timing attacks are practical, we connected two computers via a 10/100 Mb Hawking switch, and compared the results of the attack inter-process vs. inter-network. Figure 8 shows that the network does not seriously diminish the effectiveness of the attack. The noise from the network is eliminated by repeated sampling, giving a similar zero-one gap to inter-process. We note that in our tests a zero-one gap of approximately 1 millisecond is sufficient to receive a strong indicator, enabling a successful attack. Thus, networks with less than 1ms of variance are vulnerable.
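To see why repeated sampling can recover a small gap from noisy measurements, consider the following simulation sketch. Everything in it is an assumption for illustration: the exponential jitter model, the 5 ms mean jitter, the hypothetical 100 ms and 101 ms decryption times, and the use of the median as the summary statistic.

```python
import random
import statistics


def measure(true_ms, jitter_ms, rng):
    """One simulated response time: true time plus non-negative network jitter."""
    return true_ms + rng.expovariate(1.0 / jitter_ms)


def estimate(true_ms, jitter_ms, n_samples, rng):
    """Median of repeated samples, used here as a noise-resistant estimate."""
    return statistics.median(
        measure(true_ms, jitter_ms, rng) for _ in range(n_samples)
    )


rng = random.Random(2003)          # fixed seed so the run is repeatable
t_zero, t_one = 100.0, 101.0       # hypothetical times, 1 ms apart
gap = estimate(t_one, 5.0, 10_000, rng) - estimate(t_zero, 5.0, 10_000, rng)
print(f"estimated gap: {gap:.2f} ms")
```

A single sample is dominated by the jitter, while the difference of the two medians settles close to the true 1 ms separation as the number of samples grows.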


Inter-network attacks allow an attacker to also take advantage of faster CPU speeds for increasing the accuracy of timing measurements. Consider machine 1 with a slower CPU than machine 2. Then if machine 2 attacks machine 1, the faster clock cycle allows for finer grained measurements of the decryption time on machine 1. Finer grained measurements should result in fewer queries for the attacker, as the zero-one gap will be more distinct.

We show that OpenSSL applications are vulnerable to our attack from the network. We compiled Apache 1.3.27 + mod_SSL 2.8.12 and stunnel 4.04 per the respective ``INSTALL'' files accompanying the software. Apache+mod_SSL is a commonly used secure web server. stunnel allows TCP/IP connections to be tunneled through SSL.

We begin by showing servers connected by a single switch are vulnerable to our attack. This scenario is relevant when the attacker has access to a machine near the OpenSSL-based server. Figure 9 shows the result of attacking stunnel and mod_SSL where the attacking client is separated by a single switch. For reference, we also include the results for a similar attack against the simple RSA decryption server from the previous experiments.

Interestingly, the zero-one gap is larger for Apache+mod_SSL than either the simple RSA decryption server or stunnel. As a result, successfully attacking Apache+mod_SSL requires fewer queries than stunnel. Both applications have a sufficiently large zero-one gap to be considered vulnerable.

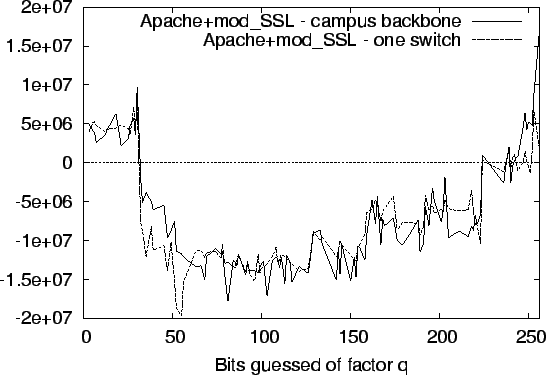

To show our timing attacks can work on larger networks, we separated the attacking client from the Apache+mod_SSL server by our campus backbone. The webserver was hosted in a separate building about a half mile away, separated by three routers and a number of switches on the network backbone. Figure 10 shows the effectiveness of our attack against Apache+mod_SSL on this larger LAN, contrasted with our previous experiment where the attacking client and server are separated by only one switch.

This experiment highlights the difficulty in determining the minimum number of queries for a successful attack. Even though both stunnel and mod_SSL use the exact same OpenSSL libraries and use the same parameters for negotiating the SSL handshake, the run-time differences result in different zero-one gaps. More importantly, our attack works even when the attacking client and application are separated by a large network.

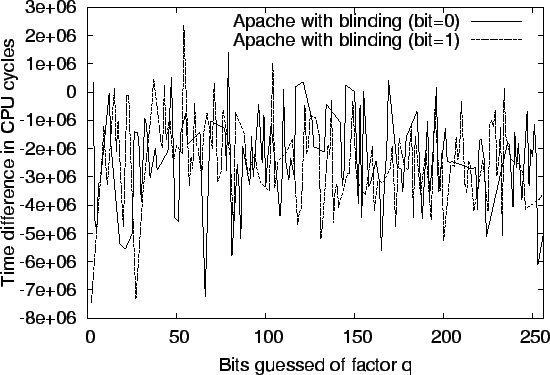

We discuss three possible defenses. The most widely accepted defense against timing attacks is to perform RSA blinding. The RSA blinding operation calculates $x = r^e g \bmod N$ before decryption, where $r$ is random, $e$ is the RSA encryption exponent, and $g$ is the ciphertext to be decrypted. $x$ is then decrypted as normal, followed by division by $r$, i.e. $x^d / r \bmod N$. Since $r$ is random, $x$ is random and timing the decryption should not reveal information about the key. Note that $r$ should be a new random number for every decryption. According to [17] the performance penalty is ![]() , depending upon implementation. Netscape/Mozilla's NSS library uses blinding. Blinding is available in OpenSSL, but not enabled by default in versions prior to 0.9.7b. Figure 11 shows that blinding in OpenSSL 0.9.7b defeats our attack. We hope this paper demonstrates the necessity of enabling this defense.
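The blinding steps can be sketched in a few lines. This is an illustrative toy, not the OpenSSL or NSS implementation: the function name `blinded_decrypt` and the symbol names (ciphertext `g`, blinding value `r`, exponents `e` and `d`, modulus `n`) are choices made here, and Python's big integers are not constant time.

```python
import secrets
from math import gcd


def blinded_decrypt(g, d, e, n):
    """Decrypt ciphertext g with private exponent d, using RSA blinding."""
    # Draw a fresh blinding value for every decryption; it must be
    # invertible modulo n so that it can be divided out afterwards.
    while True:
        r = secrets.randbelow(n - 2) + 2
        if gcd(r, n) == 1:
            break
    x = (pow(r, e, n) * g) % n        # blind: x = r^e * g mod n
    y = pow(x, d, n)                  # decrypt: y = x^d = r * g^d mod n
    return (y * pow(r, -1, n)) % n    # unblind: divide by r


# Toy key (far too small for real use).
p, q, e = 61, 53, 17
n = p * q
d = pow(e, -1, (p - 1) * (q - 1))
plaintext = blinded_decrypt(pow(42, e, n), d, e, n)
```

Because the exponentiation operates on `x` rather than on the attacker's chosen `g`, the input-dependent timing no longer correlates with the query.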


Two other possible defenses are suggested often, but are a second choice to blinding. The first is to try and make all RSA decryptions not dependent upon the input ciphertext. In OpenSSL one would use only one multiplication routine and always carry out the extra reduction in Montgomery's algorithm, as proposed by Schindler in [18]. If an extra reduction is not needed, we carry out a ``dummy'' extra reduction and do not use the result. Karatsuba multiplication can always be used by calculating ![]() , where ![]() is the ciphertext, ![]() is one of the RSA factors, and ![]() . After decryption, the result is divided by ![]() to yield the plaintext. We believe it is harder to create and maintain code where the decryption time is not dependent upon the ciphertext. For example, since the result is never used from a dummy extra reduction during Montgomery reductions, it may inadvertently be optimized away by the compiler.
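The idea of always carrying out the extra reduction can be sketched as follows. This is a variant of the ``dummy'' reduction described above, not code from OpenSSL or from [18]: the subtraction is computed unconditionally and the result is chosen with a mask, so the subtraction always feeds the output. The function names and toy modulus are assumptions, and Python integers are not constant time, so the sketch shows only the control flow.

```python
def montgomery_setup(n, r_bits):
    """Precompute n' = -n^{-1} mod R for odd n, with R = 2**r_bits > n."""
    r = 1 << r_bits
    return (-pow(n, -1, r)) % r


def montgomery_reduce(t, n, n_prime, r_bits):
    """Return t * R^{-1} mod n for 0 <= t < n * R, always subtracting n once."""
    mask = (1 << r_bits) - 1
    m = ((t & mask) * n_prime) & mask
    u = (t + m * n) >> r_bits      # exact division by R; 0 <= u < 2n
    diff = u - n                   # the extra reduction, always computed
    sel = diff >> (r_bits + 1)     # -1 if diff < 0 (keep u), 0 otherwise
    return (u & sel) | (diff & ~sel)


# Toy modulus (far too small for real use).
n, r_bits = 3233, 12
n_prime = montgomery_setup(n, r_bits)
reduced = montgomery_reduce(123456, n, n_prime, r_bits)
```

In a compiled language the same pattern still has to be checked in the generated code, since the concern raised above is precisely that the compiler may rewrite it.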

Another alternative is to require all RSA computations to be quantized, i.e. always take a multiple of some predefined time quantum. Matt Blaze's quantize library [1] is an example of this approach. Note that all decryptions must take the maximum time of any decryption; otherwise, timing information can still be used to leak information about the secret key.
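A minimal sketch of quantization follows. It does not reproduce the API of the quantize library [1]: the wrapper name `quantized_call` and its injectable `clock` and `sleep` parameters are hypothetical. In line with the note above, the quantum is assumed to be chosen at least as large as the slowest decryption, so that every call finishes on the same boundary.

```python
import math
import time


def quantized_call(fn, quantum, *args, clock=time.perf_counter, sleep=time.sleep):
    """Run fn(*args) and pad the elapsed time up to a multiple of quantum seconds."""
    start = clock()
    result = fn(*args)
    elapsed = clock() - start
    # Round the elapsed time up to the next quantum boundary (at least one).
    target = max(1, math.ceil(elapsed / quantum)) * quantum
    sleep(max(0.0, target - elapsed))
    return result


# Example: a stand-in "decryption" padded to a 50 ms quantum.
value = quantized_call(lambda x: x * 2, 0.05, 21)
```

If the quantum is smaller than the worst-case running time, slow inputs spill into a second quantum and the number of quanta itself becomes a timing signal.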

Currently, the preferred method for protecting against timing attacks is to use RSA blinding. The immediate drawbacks to this solution are that a good source of randomness is needed to prevent attacks on the blinding factor, and that it incurs a small performance degradation. In OpenSSL, neither drawback appears to be a significant problem.

We devised and implemented a timing attack against OpenSSL -- a library commonly used in web servers and other SSL applications. Our experiments show that, counter to current belief, the timing attack is effective when carried out between machines separated by multiple routers. Similarly, the timing attack is effective between two processes on the same machine and two Virtual Machines on the same computer. As a result of this work, several crypto libraries, including OpenSSL, now implement blinding by default as described in the previous section.

This material is based upon work supported in part by the National Science Foundation under Grant No. 0121481 and the Packard Foundation. We thank the reviewers, Dr. Monica Lam, Ramesh Chandra, Constantine Sapuntzakis, Wei Dai, Art Manion and CERT/CC, and Dr. Werner Schindler for their comments while preparing this paper. We also thank Nelson Bolyard, Geoff Thorpe, Ben Laurie, Dr. Stephen Henson, Richard Levitte, and the rest of the OpenSSL, mod_SSL, and stunnel development teams for their help in preparing patches to enable and use RSA blinding.


This paper was originally published in the Proceedings of the 12th USENIX Security Symposium, August 4–8, 2003, Washington, DC, USA

Last changed: 5 Aug. 2003 aw