
In this section we compare the effectiveness of ASK and other popular anti-spam alternatives.

We selected five sets, totaling 7000 emails, to evaluate the tools. We used sets spam1 and non-spam1, containing 1000 messages each, to test the content-filtering and RBL tools. We used sets spam2 and non-spam2, containing 2000 messages each, to train the Bayesian classifier. We used a larger sample for the training phase because it considerably improved the effectiveness of Bogofilter. We used set spam3 to test the effectiveness of a challenge-based approach.

Sets spam1, non-spam1, spam2, and non-spam2 were donated by ASK users. We collected set spam3 from SpamArchive [2]. Set spam3 contains no messages previously queued by ASK as those are by definition unconfirmed.

Table 2 compares the effectiveness of different tools when dealing with spam. nFN represents the number of false negatives (known spam classified as valid mail). nFP represents the number of false positives (valid mail classified as spam). %FN and %FP represent the percentage of false negatives and false positives, respectively.
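As a minimal sketch of how the percentages relate to the counts, the helper below divides each error count by the size of the corresponding test set. The function name and the example counts are illustrative assumptions and are not taken from Table 2.

```python
def error_rates(n_fn, n_spam, n_fp, n_valid):
    """Return (%FN, %FP) for one tool.

    n_fn:    known spam messages classified as valid mail (false negatives)
    n_spam:  total number of known spam messages tested
    n_fp:    valid messages classified as spam (false positives)
    n_valid: total number of known valid messages tested
    """
    if n_spam <= 0 or n_valid <= 0:
        raise ValueError("test sets must not be empty")
    return 100.0 * n_fn / n_spam, 100.0 * n_fp / n_valid


# Illustrative counts only: a hypothetical tool tested on 1000 messages per set.
pct_fn, pct_fp = error_rates(n_fn=120, n_spam=1000, n_fp=5, n_valid=1000)
print(f"%FN = {pct_fn:.1f}, %FP = {pct_fp:.1f}")
```

Keeping the two denominators separate matters because the spam and non-spam sets need not be the same size.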

We distributed RBL providers in three groups, named ``RBL Group 1,'' ``RBL Group 2,'' and ``RBL Group 3.'' The first group contains only one RBL provider and represents the minimum protection case. The second group contains a more reasonable mix with three distinct RBL providers. Group 3 represents an extreme case with nine RBL providers. The composition of these groups is listed in Appendix A.

We tested DCC using two different thresholds (number of reports for a message to be considered spam). In the first test, we considered one report enough to mark the message as spam. The second test used a more conservative approach where five reports are needed as opposed to one.
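The threshold rule used in the two DCC tests can be sketched as follows. This is only an illustration of the decision rule described above; the function and constant names are hypothetical and do not correspond to the DCC client interface.

```python
# Thresholds used in the two tests described in the text.
THRESHOLD_AGGRESSIVE = 1    # one report is enough
THRESHOLD_CONSERVATIVE = 5  # five reports are needed


def is_spam_by_reports(report_count, threshold):
    """Mark a message as spam once it has been reported at least `threshold` times."""
    if threshold < 1:
        raise ValueError("threshold must be at least 1")
    return report_count >= threshold


# A message reported three times is spam under the first test but not the second.
print(is_spam_by_reports(3, THRESHOLD_AGGRESSIVE))
print(is_spam_by_reports(3, THRESHOLD_CONSERVATIVE))
```

A higher threshold trades more false negatives for a lower risk of marking legitimate bulk mail as spam.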

Bogofilter proved to be the most effective content-filtering tool, followed by SpamAssassin. The percentage of false positives is very close for these two tools. DCC had a considerable percentage of false negatives but produced no false positives.

A single RBL proved to be almost completely ineffective in our tests, with only three spam emails detected correctly. Raising the number of RBLs checked to three resulted in a 65.2% rate of false negatives, but also raised the percentage of false positives to 1.6%. Even in the extreme case of Group 3, with nine RBLs checked, the rate of false negatives remained high at 42%, while the rate of false positives reached an unworkable 30.8%. Of all solutions tested, RBLs were clearly the worst performers.

To test ASK's effectiveness, we sent 1000 confirmations with a specially crafted envelope address and sender (to catch bounces and replies). We waited one week for confirmations to return. The results can be seen in Table 3.


Of the 18 responses received, 14 were from one specific online marketing company and obviously unsolicited. The remaining four came from bargain notification services, apparently sent after the user subscribed to their services. Only three replies kept the original confirmation code in the message subject (without which no message delivery takes place), bringing the rate of false negatives to 0.3%. Even if we assume that all spammers could keep the ``Subject'' header intact, the rate of false negatives would still be only 1.8%.
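The two rates above follow from a simple rule: a reply counts as a delivery only if its subject still carries the confirmation code. The sketch below reproduces the arithmetic under that rule. The code format (`code-0001`) and the subject lines are hypothetical placeholders, not ASK's actual format.

```python
SENT = 1000  # confirmations sent in the test


def delivered_count(replies):
    """Count replies whose subject still contains the expected confirmation code.

    `replies` is a list of (reply_subject, expected_code) pairs.
    """
    return sum(1 for subject, code in replies if code in subject)


def percent(count, total):
    return 100.0 * count / total


# 18 replies in total: 3 keep the code in the subject, 15 do not.
replies = [(f"Re: please confirm [code-{i:04d}]", f"code-{i:04d}") for i in range(3)]
replies += [("Thanks for your interest!", f"code-{i:04d}") for i in range(3, 18)]

observed = percent(delivered_count(replies), SENT)  # replies that would be delivered
worst_case = percent(len(replies), SENT)            # if every reply had kept the code
print(f"observed %FN = {observed:.1f}, worst case %FN = {worst_case:.1f}")
```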

False positives in a challenge-authentication system occur when valid senders do not reply to confirmation messages. Systematic determination of the false positive rate in a challenge-based authentication tool is a difficult task. A confirmation message would have to be re-sent to known valid senders, causing all sorts of problems. Also, some senders who replied to the first confirmation could get confused by the second confirmation request and ignore it completely.

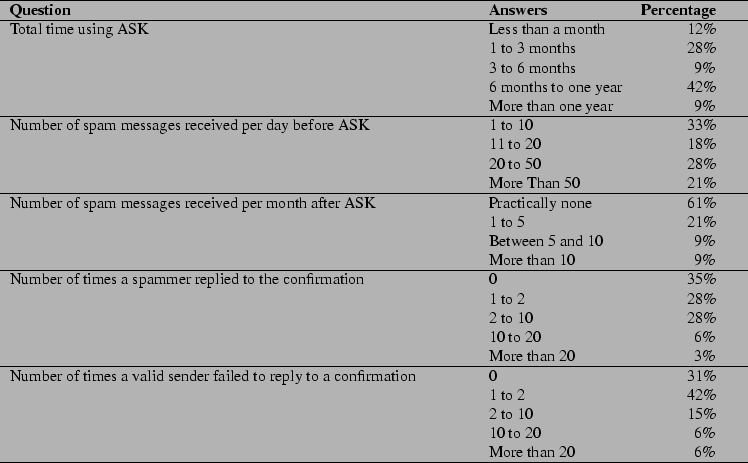

In an effort to understand other users' experiences with spam and how ASK performs in their environments, we submitted a simple survey [1] to the main ASK mailing-list during March of 2003. A total of 32 users responded. The results can be seen in Table 4.

The results indicate that most users have been using ASK for six months to one year, with a substantial number of new users in the last three months.

The largest group of ASK users (33%) received 10 to 20 spam messages a day, with a sizable group (28%) receiving 20 to 50 spam messages a day. It is interesting to note that the distribution is fairly even across the categories, indicating that ASK caters to all classes of users when it comes to the amount of spam received.

After beginning to use ASK, 61% of the users report that practically no spam is present, and 21% report that only 1 to 5 spam messages are received per month. This brings the share of users with a substantial reduction of spam to 82%.

One important aspect of the survey is to verify whether spammers reply to confirmation messages. Of the respondents, 35% reported no cases of spam delivered for this reason, while 28% reported one or two cases. This brings the total percentage of users with fewer than three cases of spammers replying to confirmations to 63%. Another 28% reported between two and ten cases since ASK was installed, which can still be considered a good mark given that most users appear to have been using ASK for more than six months.

Another point we tried to clarify is how often valid senders fail to respond to the confirmations (false positives). The largest group of users (42%) reported very few cases of false positives (one or two), and 31% reported no cases at all. Combined, these results indicate that 73% of those who responded to the survey had no significant problems with false positives. This suggests that, as a rule, valid senders tend to reply to confirmation messages.

ASK requires a Unix/Linux compatible operating system. ASK was written in Python and should run with no or few modifications under most Unix variants.

ASK is compatible with most major MTAs available today, including Sendmail, Qmail, Postfix, and Exim. Procmail support is also available. Any MTA capable of delivering to a pipe should work without problems. ASK natively supports mbox and Maildir mailbox formats, with MH planned.

Incoming mail is read from standard input, making it possible to cascade ASK with other anti-spam solutions like SpamAssassin or a multitude of Bayesian filters and RBLs.
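To illustrate what cascading through a pipe can look like, the sketch below parses one message from a file-like stream and honors a verdict left by an upstream filter. It is not ASK's actual logic: the three outcomes, the whitelist set, and the function name are hypothetical, and it assumes the upstream filter marks spam with an `X-Spam-Flag: YES` header, as SpamAssassin typically does.

```python
import email
from email.utils import parseaddr


def decide(stream, whitelist):
    """Return 'discard', 'deliver', or 'challenge' for one message read from `stream`.

    `whitelist` is a set of lowercase sender addresses (hypothetical structure).
    """
    msg = email.message_from_file(stream)

    # An upstream filter in the pipeline has already flagged this message.
    if msg.get("X-Spam-Flag", "").strip().upper() == "YES":
        return "discard"

    # Known senders are delivered without a confirmation round trip.
    sender = parseaddr(msg.get("From", ""))[1].lower()
    if sender in whitelist:
        return "deliver"

    # Everyone else would receive a confirmation request.
    return "challenge"
```

When invoked by an MTA or Procmail, the stream would simply be `sys.stdin`, for example `decide(sys.stdin, {"friend@example.org"})`.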

To evaluate ASK's performance, we selected a set of 1000 spam messages contributed by ASK users, totaling 14MB of data. On average, each message is 14KB long and contains 457 lines of text. Text lines have an average length of 31 bytes.

We configured ASK to send a confirmation for each message. ASK took 524 seconds (on a Pentium III/500MHz workstation) to process all messages, bringing the average processing overhead to 0.52 seconds per email received.

During this test, we tried different logging levels (0, 1, 10) and different list sizes (5, 100, 500 lines). No significant variation was detected in the results.

To measure the network overhead, we configured ASK to keep the first 50 lines of each message in the confirmation. This resulted in an average size of 3.3KB per confirmation message, with two languages selected, totaling 3.3MB of extra network traffic. We estimate the overhead of confirmation bounces to be around 2MB, but it can be avoided by configuring the system to send confirmation messages with a null ``Return-Path.''
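The per-message figures reported for this test can be checked with a few lines of arithmetic. The sketch below uses only the measurements quoted in the text and assumes 1MB = 1000KB, which matches the rounding used here. The function names are illustrative.

```python
MESSAGES = 1000        # spam messages in the test set
TOTAL_SECONDS = 524    # total processing time on the test workstation
CONFIRMATION_KB = 3.3  # average confirmation size (first 50 lines, two languages)


def seconds_per_message(total_seconds, messages):
    """Average processing overhead per received email."""
    return total_seconds / messages


def confirmation_traffic_mb(messages, kb_each, kb_per_mb=1000):
    """Extra outbound traffic if every message triggers one confirmation."""
    return messages * kb_each / kb_per_mb


print(f"{seconds_per_message(TOTAL_SECONDS, MESSAGES):.2f} s per message")
print(f"{confirmation_traffic_mb(MESSAGES, CONFIRMATION_KB):.1f} MB of confirmations")
```

The same two functions can be reused to estimate overhead for a site's own daily volume by substituting its message count.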

Another area of interest is the overhead in processing remote commands. ASK took 30 seconds to process a queue with 1000 messages. This is the most expensive remote command, as every file in the queue has to be opened and inspected. Proper queue maintenance should keep the number of queued files well under 1000, considerably reducing the time for this operation.

The CPU overhead is acceptable for most sites, but should be taken into account on high-volume servers. The network traffic overhead should be of no concern unless network service is billed by volume. In that case, a few bandwidth reduction measures can be taken, such as reducing the number of lines quoted from the original message in the confirmation or limiting confirmation messages to only one language.