
Chris Karlof

J.D. Tygar

David Wagner

{ckarlof, tygar, daw}@cs.berkeley.edu

University of California, Berkeley

In contrast, with email registration links, the unsafe actions a user must avoid are different from the action she must take to legitimately register her computer, i.e., click on the registration link. Once a user clicks on a registration link and her browser sends it to the legitimate server, it becomes useless to an attacker.

In addition, a web site can include a reminder in the registration email of the only safe action (i.e., click on the link) and warn against the likely attacks. Although a web site using challenge questions can issue similar warnings, these warnings will likely be absent during an attack.

For these reasons, we hypothesize that using email registration links is more resistant to these types of MITM social engineering attacks than challenge questions. To test this hypothesis, our study will compare the success of different simulated MITM attacks against email registration links and challenge questions.

First, it is difficult to simulate the experience of risk for users without crossing ethical boundaries [16]. To address this, many experimenters employ role-playing, where users are asked to assume fictitious roles. However, role-playing participants may act differently than they would in the real world. If users feel that nothing is at stake and that their actions have no consequences, they may take risks that they would avoid if their own personal information were at stake.

Second, we must limit the effect of demand characteristics. Demand characteristics refer to cues which cause participants to try to guess the study's purpose and change their behavior in some way, perhaps unintentionally. For example, if they agree with the hypothesis of the study, they may change their behavior in a way which tries to confirm it. Since security is often not users' primary goal, demand characteristics are particularly challenging for security studies. An experiment which intentionally or unintentionally influences users to pay undue attention to the security aspects of the tasks will reduce its ecological validity.

Third, we must minimize the impact of authority figures during the study. Researchers have shown that people tend to obey authority figures, and that the presence of authority figures can cause study participants to display extreme behavior they would not engage in on their own. Classic examples of this are Milgram's "shocking" experiment [19] and the Stanford prison experiment [11]. For security studies, this tendency may cause a study to underestimate the strength of some defense mechanisms and overestimate the success rate of some attacks. For example, if we simulate a social engineering attack during the study, users may be more susceptible to adversarial suggestions because they misinterpret these to be part of the experimenter's instructions. They may fear looking incompetent or stubborn if they do not follow the instructions correctly. This problem may be exacerbated if there is an experimenter lurking nearby.

Fourth, we must identify an appropriate physical location for the experiment. The vast majority of previous security user studies simulating attacks have been conducted in a controlled laboratory environment. There are many advantages to a laboratory environment: the experimenter can control more variables, monitor more subtle user behavior, and debrief and interview participants immediately upon completion, while the study is still fresh in their minds. But a laboratory environment for a security study can cause users to evaluate risk differently than they would in the real world. A user may view the laboratory environment as safer because she feels that the experimenter "wouldn't let anything bad happen".

It may be tempting to ignore some or all of these issues in a comparative study such as ours. Since the effects of these factors will be present in both the control group (i.e., challenge question users) and the treatment group (i.e., email registration users), one might conclude that ignoring these factors would not hinder a valid, realistic comparison between the two groups.

This is a dangerous conclusion. It is not clear to what degree these issues affect various types of security-related mechanisms. In particular, there is no evidence that these issues have a similar magnitude of effect on challenge question users as on email registration users. Therefore, it is prudent to control for these issues in our design as much as possible.

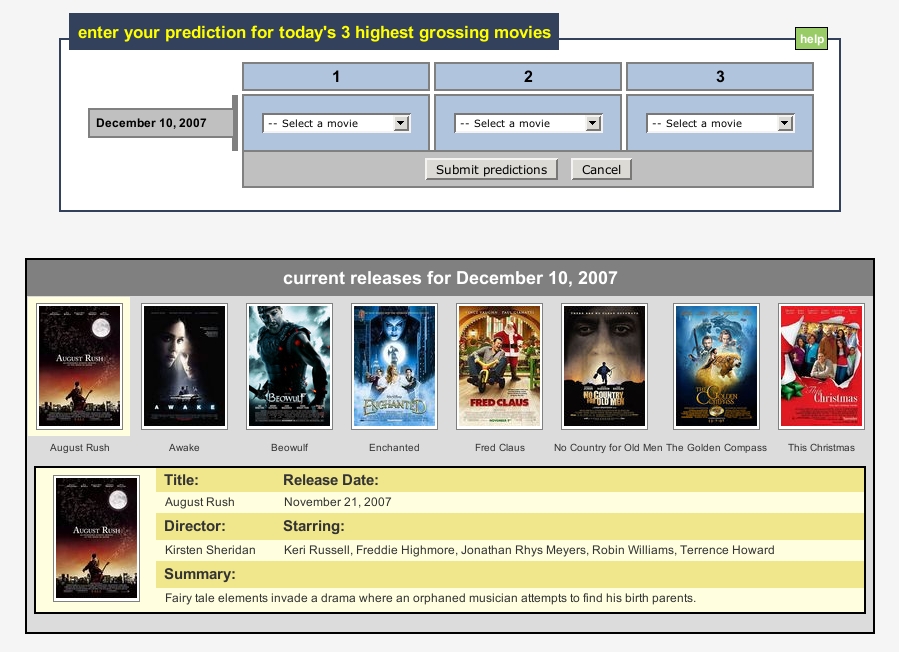

Each user receives $20 as base compensation, and we reward her up to an additional $3 per prediction depending on the accuracy of her predictions. We tell each user that she must make seven predictions to complete the experiment, so the total maximum a user can receive is $41. Users receive their compensation via PayPal upon completion.
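The payout arithmetic above can be checked with a minimal sketch. The dollar amounts and the number of predictions come from the text; the function name and the idea of passing per-prediction bonuses as a list are illustrative assumptions, not a description of the study's actual payment code.

```python
BASE_PAY = 20         # base compensation in dollars (from the text)
MAX_BONUS = 3         # maximum accuracy bonus per prediction (from the text)
NUM_PREDICTIONS = 7   # predictions needed to complete the study (from the text)


def total_compensation(bonuses):
    """Return the total payout for a list of per-prediction bonuses.

    Hypothetical helper: how a bonus is derived from prediction accuracy
    is not specified here, so the bonuses are taken as given.
    """
    if len(bonuses) > NUM_PREDICTIONS:
        raise ValueError("a user makes at most seven predictions")
    if any(b < 0 or b > MAX_BONUS for b in bonuses):
        raise ValueError("each bonus must lie between $0 and $3")
    return BASE_PAY + sum(bonuses)


# Best case: the full bonus on every prediction, 20 + 7 * 3 = 41.
max_payout = total_compensation([MAX_BONUS] * NUM_PREDICTIONS)
```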

We plan to recruit approximately 200 users, divided into 5 groups. One group uses challenge questions for registration and the other four groups use different variants of email registration links. We discuss the email registration groups further in Section 5.3.2. We show a summary of the different groups in Table 1.


If the user chooses to register her computer, we redirect her to the registration page. If she is in the challenge question group, we prompt her to set up her challenge questions with the dialog shown in Figure 3. She must select two questions and provide answers. After confirming the answers, she enters her account and proceeds with her first prediction.

If she is part of an email registration group, then she sees a page informing her that she has been sent a registration email, and she must click on the link saying "Click on this secure link to register your computer". After clicking on the link, she can enter her account and make a prediction. We send registration emails primarily in HTML format, but also include a plain text alternative (using the multipart/alternative content type) for users who have HTML viewing disabled in their email clients. We embed the same registration link in both parts, but include a distinguishing parameter in the link to record whether the user was presented with the HTML or plain text version of the email. We discuss how we use this information in Section 5.3.2. Screenshots of registration emails are shown in Figures 6-9.
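As an illustration of the multipart/alternative structure described above, the following sketch builds such a message with Python's standard `email` package. The sender address, the `study.example` URL, and the parameter names `token` and `fmt` are placeholders chosen for this example; the study's real link format is not specified in the text.

```python
from email.message import EmailMessage
from html import escape
from urllib.parse import urlencode

LINK_TEXT = "Click on this secure link to register your computer"


def build_registration_email(to_addr, token,
                             base_url="https://study.example/register"):
    """Build a multipart/alternative registration email (illustrative only).

    Both parts carry the same token; the hypothetical `fmt` parameter
    records which version of the email the user actually clicked from.
    """
    def link(fmt):
        return base_url + "?" + urlencode({"token": token, "fmt": fmt})

    msg = EmailMessage()
    msg["From"] = "registration@study.example"   # placeholder sender
    msg["To"] = to_addr
    msg["Subject"] = "Register your computer"

    # Plain text part, shown by clients with HTML viewing disabled.
    msg.set_content(LINK_TEXT + ":\n" + link("text") + "\n")

    # HTML part; adding it converts the message to multipart/alternative.
    html_link = escape(link("html"), quote=True)
    msg.add_alternative(
        '<html><body><p><a href="' + html_link + '">' + LINK_TEXT
        + "</a></p></body></html>",
        subtype="html",
    )
    return msg
```

On the server side, reading the `fmt` value from the clicked link would then be enough to log which rendering the user saw.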

Both registration procedures set an HTTP cookie and a Flash Local Shared Object on the user's computer to indicate the computer is registered. On subsequent login attempts from that computer, the user gains access to her account by simply entering her username and password. But if she logs in from a computer we don't recognize, then we prompt her to answer her challenge questions (Figure 4) or send her a new registration link to click on, depending on the user's group.
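The login decision described above can be summarized in a short sketch. It assumes that either marker (the cookie or the Flash Local Shared Object) suffices to recognize a computer, which the text does not state explicitly, and it takes group 1 to be the challenge question group, as the group numbering elsewhere in the paper indicates. The function and return values are hypothetical names.

```python
def next_login_step(password_ok, cookie_present, lso_present, group):
    """Decide what happens after a login attempt (illustrative sketch).

    Assumption: a computer counts as registered if either the HTTP cookie
    or the Flash Local Shared Object is present.
    """
    if not password_ok:
        return "reject"
    if cookie_present or lso_present:
        # Recognized computer: username and password are enough.
        return "grant_access"
    if group == 1:
        # Challenge question group.
        return "ask_challenge_questions"
    # Email registration groups (2-5).
    return "send_registration_email"
```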

Instead, we use social engineering attacks that attempt to steal and use a valid registration link before the user clicks on it, giving the attacker a valid registration token for the user's account. Since this link has no value outside the scope of the study, this limits the potential risk to users.

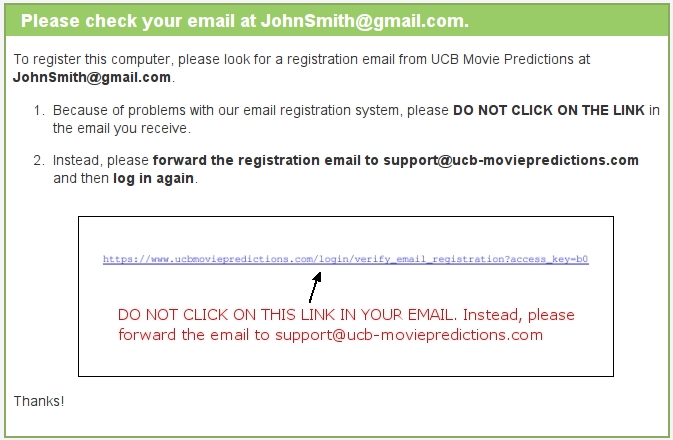

We identified two compelling and straightforward attacks of this type. Our first attack asks the user to copy and paste the registration URL from her email into a text box included in the attack web page. Our second attack asks the user to forward the registration email to an address with a similar looking domain. Although we have no evidence these are the most effective attack strategies, it is certainly necessary for an email registration scheme to resist these attacks in order to be considered secure.

We simulate the copy and paste attack against groups 2 and 3, and simulate the forwarding attack against groups 4 and 5. For both attacks we assume the attacker can cause a new registration email to be delivered to the user from the legitimate site.

For both attacks, the attack page first tells the user that "because of problems with our email registration system" she should not click on the link in the email she receives. For the forwarding attack, it instructs the user to forward the email to the attacker's email address. For the copy and paste attack, the attack page presents a text box with a "submit" button and instructs the user to copy and paste the registration link into the box.

Both attacks also present pictorial versions of the instructions, with a screenshot of how the registration link appears in the email. To maximize the effectiveness of this picture, we give the attacker the advantage of knowing the distribution of HTML and plain text registration emails previously viewed by the user during the study (see Section 5.2). The attacker then displays the pictorial instructions corresponding to the majority; in case of a tie it displays a screenshot of the HTML version. Screenshots of these attacks are shown in Figures 10-13.
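The majority rule for choosing the pictorial instructions, including the tie-break in favor of HTML, can be written as a small function. The input encoding (a list of `"html"` and `"text"` strings, one per registration email the user previously viewed) is an assumption made for this sketch.

```python
def pictorial_version(viewed_formats):
    """Pick which screenshot the simulated attacker shows.

    `viewed_formats` lists the format ("html" or "text") of each
    registration email the user previously viewed. The majority wins;
    a tie (including an empty history) falls back to the HTML version.
    """
    html_count = viewed_formats.count("html")
    text_count = viewed_formats.count("text")
    return "text" if text_count > html_count else "html"
```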

If a group 2-5 user clicks on the registration link first, then we consider the attack a failure. If the user forwards the email or submits the link first, then we consider the attack a success. Either way, the experiment ends.

For all users, attempts to navigate to other parts of the site redirect the user back to the attack page. If the user resists the attack for 30 minutes, then on her next login, the experiment ends and we consider the attack a failure. The attack pages for groups 1-3 contain a JavaScript key logger, in case a user begins to answer her challenge questions or enter the link, but then changes her mind and does not submit. If our key logger detects this, we consider the attack a success. We measure the "strength" of the registration mechanism in each group by calculating the percentage of users who resist the attack.
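The outcome rules and the "strength" metric can be made concrete with a minimal sketch. The event names below are labels invented for this example; only the mapping from events to success or failure, and the definition of strength as the percentage of users who resist, come from the text.

```python
# Hypothetical event labels for the deciding event in each session.
SUCCESS_EVENTS = {
    "forwarded_email",          # forwarding attack, groups 4 and 5
    "submitted_link",           # copy and paste attack, groups 2 and 3
    "submitted_answers",        # challenge questions, group 1
    "keylogged_partial_entry",  # typed but did not submit, groups 1-3
}
FAILURE_EVENTS = {
    "clicked_registration_link",  # legitimate registration came first
    "resisted_30_minutes",        # attack timed out
}


def attack_outcome(deciding_event):
    """Classify a session as an attack 'success' or 'failure'."""
    if deciding_event in SUCCESS_EVENTS:
        return "success"
    if deciding_event in FAILURE_EVENTS:
        return "failure"
    raise ValueError("unknown event: " + repr(deciding_event))


def strength(outcomes):
    """Percentage of users in a group who resisted the attack."""
    if not outcomes:
        raise ValueError("no outcomes recorded for this group")
    resisted = sum(1 for outcome in outcomes if outcome == "failure")
    return 100.0 * resisted / len(outcomes)
```

For example, a group in which three of four users resist would have a strength of 75 percent.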

We simulate the experience of risk by giving users password-protected accounts at our web site and creating an illusion that money they "win" during the study is "banked" in these accounts. To suggest that there is a chance that the user's compensation could be stolen if her account is hijacked, we provide an "account management" page which allows the user to change the PayPal email address associated with her account. However, these incentives have limitations. Users may still consider the overall risk to be low: the size of the compensation may not be large enough to warrant extra attention, or they may recognize that a small university research study is unlikely to be the target of attack.

Informal testing suggests that predicting popular movies can be fun and engaging for users. In addition, the financial incentive for making accurate predictions will help focus the users' attention away from the security aspects of the study. For these reasons, the effect of demand characteristics is sharply diminished in our study.

We minimize the impact of authority figures by requiring the users to participate remotely, from their own computers and at times of their choosing. Users never need to meet us or make any decisions in a laboratory environment. All instructions are sent through our web site and emails. Removing authority figures from the vicinity of users improves the validity of our study. Similarly, by requiring users to interact with our web site outside a laboratory, we increase the chances users evaluate risks similarly to the way they do in the real world. Nonetheless, we acknowledge that our study design is not perfect: there is some risk that users may interpret instructions from the simulated attackers as "orders" from us, the experimenters.

Our study is ethical. The risk to users during the study is minimal, and for us to use a user's data, she must explicitly reconsent after a full debriefing on the true nature of the study. The study protocol described here was approved by UC Berkeley's Institutional Review Board on human experimentation.

To help create the experience of risk, Schechter et al. asked real Bank of America SiteKey customers to log into their accounts from a laptop in a classroom [20]. Although SiteKey uses challenge questions, this study did not evaluate SiteKey's use of them. Instead, this study focused on whether each user would enter her online banking password in the presence of clues indicating her connection was insecure. They simulated site-forgery attacks against each user by removing various security indicators (e.g., her personalized SiteKey image) and causing certificate warnings to appear, and checked if each user would still enter her password. Since SiteKey will only display a user's personalized image after her computer is registered, Schechter et al. first required each user to answer her challenge questions during a "warm-up" task to re-familiarize her with the process of logging into her bank account. No attack was simulated against the users during this task.

Requiring users to use their own accounts is certainly a good start for creating a sense of risk, but the degree to which the academic setting of the study affected the users' evaluation of their actual risk is unclear. Although the experimenters were not in the same room as the users while they used the computer, the fact that they were nearby may have influenced the users to appear "helpful" and behave with less caution than they normally would.

Jagatic et al. [15] and Jakobsson et al. [16] designed phishing studies which remotely simulated phishing attacks against users without their prior consent. Although these experiments simulate real attacks and achieve a high level of ecological validity, not obtaining prior consent raises ethical issues. After learning that they were unknowing participants in one study [15], many users responded with anger and some threatened legal action [5].

In a real-world attack, a pharmer would most likely be unable to obtain a valid certificate for the target site and would therefore not initiate an SSL connection with users; otherwise, users would see a certificate warning. Since our hypothesis is that email registration is more secure than challenge questions, we must ensure that this artificial restriction does not bias the results against challenge questions. Our solution is to maximize the benefits of SSL for the challenge question users and minimize the benefits of SSL for the email registration users. To do this, we assume a potential adversary attacking email registration has obtained a valid certificate for the target domain, while a potential adversary attacking challenge question based registration has not. This means group 2-5 users do not see certificate warnings during the attack, but group 1 users do. We implement this by redirecting group 1 users to a different Apache instance (at port 8090) with a self-signed certificate, while group 2-5 users continue to use the original Apache instance in "attack mode". This means the "attack" domain shown in the URL bar for group 1 users will contain a port number, but the "attack" domain for group 2-5 users will not.
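The per-group routing can be sketched as a function that returns the origin a user is sent to during the simulated attack. Port 8090 and the group split come from the text; the host name `study.example` and the function itself are placeholders, and the sketch does not model the Apache configuration.

```python
SELF_SIGNED_PORT = 8090  # second Apache instance with a self-signed certificate


def attack_origin(group, host="study.example"):
    """Return the origin shown in the URL bar during the simulated attack.

    Group 1 (challenge questions) is redirected to the instance with the
    self-signed certificate, so the URL includes a port number and the
    browser shows a certificate warning. Groups 2-5 stay on the original
    instance, so no port appears.
    """
    if group not in (1, 2, 3, 4, 5):
        raise ValueError("group must be between 1 and 5")
    if group == 1:
        return "https://" + host + ":" + str(SELF_SIGNED_PORT)
    return "https://" + host
```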

Our exit survey starts with general demographic questions such as gender, age range, and occupational area. The second section of the survey collects information on the user's general computing background such as her primary operating system, primary web browser, average amount of time she uses a web browser per week, what kind of financial transactions she conducts online, and how long she has conducted financial transactions online.

The final part of the survey asks more specific questions about the user's experiences during the study. One of our goals is to determine how much risk the user perceived while using our site. Since risk is subjective, we ask each user to think about the general security concerns she has while browsing the World Wide Web and the precautions she takes to protect herself when logging into web sites. Then, we ask her to rank how often and thoroughly she applies precautions when logging into the following types of web sites: banking, shopping, PayPal, web email, social networking, and our study site.

Our second goal is to probe each user's thought process during the simulated attack. We ask her if she ever saw anything suspicious or dangerous during her interactions with our site, and if she did, what she did in response (if anything). On the next page, we show her a screenshot of the attack, and we ask her if she remembers seeing this page. If she did, we ask her whether she followed the instructions on the attack page and to explain the reasoning behind her decision.

Our final goal is to understand each user's general impressions of machine authentication. We ask each user to describe how she thinks registration works and what security benefits she thinks it provides, if any. We also ask her to quantitatively compare the security and convenience of using machine authentication in conjunction with passwords to using passwords alone.

The translation was initiated by Chris Karlof on 2008-03-18