16th USENIX Security Symposium

Pp. 167–182 of the Proceedings

BotHunter: Detecting Malware Infection Through IDS-Driven Dialog Correlation

| Guofei Gu1, Phillip Porras2, Vinod Yegneswaran2, Martin Fong2, Wenke Lee1 |

| 1College of Computing | 2Computer Science Laboratory |

| Georgia Institute of Technology | SRI International |

| 266 Ferst Drive | 333 Ravenswood Avenue |

| Atlanta, GA 30332 | Menlo Park, CA 94025 |

Abstract

We present a new kind of network perimeter monitoring strategy, which

focuses on recognizing the infection and coordination dialog that

occurs during a successful malware infection. BotHunter is an

application designed to track the two-way communication flows between

internal assets and external entities, developing an evidence trail of

data exchanges that match a state-based infection sequence model.

BotHunter consists of a correlation engine that is driven by three

malware-focused network packet sensors, each charged with detecting

specific stages of the malware infection process, including inbound

scanning, exploit usage, egg downloading, outbound bot coordination

dialog, and outbound attack propagation. The BotHunter correlator

then ties together the dialog trail of inbound intrusion alarms with

those outbound communication patterns that are highly indicative

of successful local host infection. When a sequence of evidence is

found to match BotHunter's infection dialog model, a consolidated

report is produced to capture all the relevant events and event

sources that played a role during the infection process. We refer to

this analytical strategy of matching the dialog flows between internal

assets and the broader Internet as dialog-based correlation, and

contrast this strategy to other intrusion detection and alert

correlation methods. We present our experimental results using

BotHunter in both virtual and live testing environments, and discuss

our Internet release of the BotHunter prototype. BotHunter is made

available both for operational use and to help stimulate research in

understanding the life cycle of malware infections.

1 Introduction

Over the last decade, malicious software or malware has risen

to become a primary source of most of the

scanning [38], (distributed) denial-of-service (DOS)

activities [28], and direct attacks [5],

taking place across the

Internet. Among the various forms of malicious software, botnets

in particular have recently distinguished themselves to be among the

premier threats to computing assets [20]. Like the

previous generations of computer viruses and worms, a bot is a

self-propagating application

that infects vulnerable hosts through direct exploitation or

Trojan insertion. However, all bots distinguish themselves from the

other malware forms by their ability to establish a command and

control (C&C) channel through which they can be updated and

directed. Once collectively under the control of a C&C server,

bots form what is referred to as a botnet. Botnets are

effectively a collection of slave computing and data assets to be sold

or traded for a variety of illicit activities, including information

and computing resource theft, SPAM production, hosting phishing

attacks, or for mounting distributed denial-of-service (DDoS)

attacks [12,34,20].

Network-based intrusion detection systems (IDSs) and intrusion

prevention systems (IPSs) may come to mind as the most appealing

technology for detecting and mitigating botnet threats. Traditional

IDSs, whether signature based [30,35] or

anomaly based [46,8], typically focus on inbound

packets flows for signs of malicious point-to-point intrusion

attempts. Network IDSs have the capacity to detect initial incoming

intrusion attempts, and the prolific frequency with which they produce

such alarms in operational networks is well

documented [36]. However, distinguishing a

successful local host infection from the daily myriad of scans and

intrusion attempts is as critical and challenging a task as any facet

of network defense.

Intrusion report correlation enables an analyst to obtain higher-level

interpretations of network sensor alert streams, thereby alleviating

noise-level issues with traditional network IDSs. Indeed, there is

significant research in the area of consolidating network security

alarms into coherent incident pictures. One major vein of research in

intrusion report correlation is that of alert fusion, i.e.,

clustering similar events under a single label [42]. The

primary goal of fusion is log reduction, and in most systems

similarity is based upon either attributing multiple events to a

single threat agent or providing a consolidated view of a common set

of events that target a single victim. The bot infection problem

satisfies neither criterion. The bot infection process spans

several diverse transactions that occur in multiple directions and

potentially involves several active participants. A more applicable

area of alert correlation research is multistage attack recognition,

in which predefined scenario templates capture multiple state

transition sequences that may be initiated by multiple threat

agents [40,29]. In Section 3 we discuss why

predefined state transition models simply do not work well in bot

infection monitoring. While we argue that bot infections do regularly

follow a series of specific steps, we find it rare to accurately

detect all steps, and find it equally difficult to predict the order

and time-window in which these events are recorded.

Our Approach: We introduce an "evidence-trail"

approach to recognizing successful bot infections through the

communication sequences that occur during the infection process. We

refer to this approach as the infection dialog correlation

strategy. In dialog correlation, bot infections are modeled as a set

of loosely ordered communication flows that are exchanged between an

internal host and one or more external entities. Specifically,

we model all bots as sharing a common set of underlying actions that

occur during the infection life cycle: target scanning, infection

exploit, binary egg download and execution, command and control

channel establishment, and outbound scanning. We neither assume that

all these events are required by all bots nor that every event

will be detected by our sensor alert stream. Rather, our dialog

correlation system collects an evidence trail of relevant infection

events per internal host, looking for a threshold combination of

sequences that will satisfy our requirements for bot declaration.

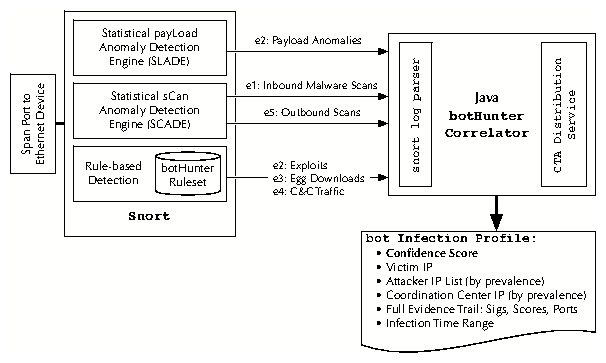

Our System: To demonstrate our methodology, we introduce a

passive network monitoring system called BotHunter, which

embodies our infection dialog correlation strategy. The BotHunter

correlator is driven by Snort [35] with a customized malware-focused ruleset,

which we further augment with two additional bot-specific

anomaly-detection plug-ins for malware analysis: SLADE and

SCADE. SLADE implements a lossy n-gram payload analysis of incoming

traffic flows, targeting byte-distribution divergences in selected

protocols that are indicative of common malware intrusions. SCADE

performs several parallel and complementary malware-focused port scan

analyses to both incoming and outgoing network

traffic. The BotHunter correlator associates inbound scan and

intrusion alarms with outbound communication patterns that are highly

indicative of successful local host infection. When a sufficient

sequence of alerts is found to match BotHunter's infection dialog

model, a consolidated report is produced to capture all the

relevant events and event participants that contributed to the

infection dialog.

Contributions: Our primary contribution in this paper is to

introduce a new network perimeter monitoring strategy, which focuses

on detecting malware infections (specifically bots/botnets) through

IDS-driven dialog correlation. We present an abstraction of the major

network packet dialog sequences that occur during a successful bot

infection, which we call our bot infection dialog model. Based

on this model we introduce three bot-specific sensors, and our

IDS-independent dialog correlation engine. Ours is the first

real-time analysis system that can automatically derive a profile of

the entire bot detection process, including the identification of the

victim, the infection agent, the source of the egg download, and the

command and control center.1 We also present our analysis of

BotHunter against more than 2,000 recent bot infection experiences, which

we compiled by deploying BotHunter both within a high-interaction

honeynet and through a VMware experimentation platform using recently

captured bots. We validate our infection sequence model by

demonstrating how our correlation engine successfully maps the network

traces of a wide variety of recent bot infections into our model.

The remainder of this paper is outlined as follows. In

Section 2 we discuss the sequences of communication exchanges

that occur during a successful bot and worm infection.

Section 3 presents our bot infection dialog model, and

defines the conditions that compose our detection requirements.

Section 4 presents the BotHunter architecture, and

Section 5 presents our experiments performed to assess

BotHunter's detection performance. Section 6 discusses

limitations and future work, and Section 7 presents related

work. Section 8 discusses our Internet release of the

BotHunter system, and in Section 9 we summarize our results.

2 Understanding Bot Infection Sequences

Understanding the full complexity of the bot infection life cycle is

an important challenge for future network perimeter defenses. From

the vantage point of the network egress position, distinguishing

successful bot infections from the continual stream of background

exploit attempts requires an analysis of the two-way dialog flow that

occurs between a network's internal hosts and the Internet. On a

well-administered network, the threat of a direct-connect exploit is

limited by the extent to which gateway filtering is enabled. However,

contemporary malware families are highly versatile in their ability to

attack susceptible hosts through email attachments, infected P2P

media, and drive-by-download infections. Furthermore, with the

ubiquity of mobile laptops and virtual private networks (VPNs), direct

infection of an internal asset need not necessarily take place across

an administered perimeter router. Regardless of how malware enters a

host, once established inside the network perimeter the challenge

remains to identify the infected machine and remove it as quickly as

possible.

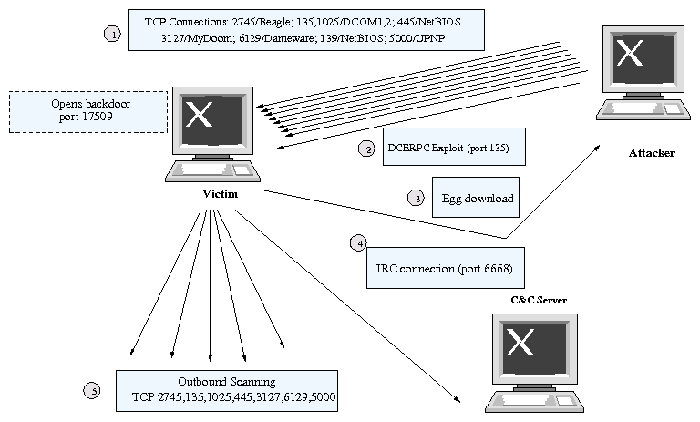

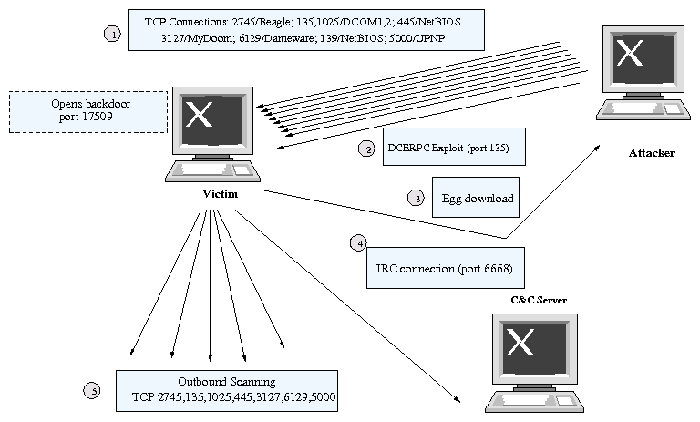

For this present study, we focus on a rather narrow aspect of bot

behavior. Our objective is to understand the sequence of network

communications and data exchanges that occur between a victim host and

other network entities. To illustrate the stages of a

bot infection, we outline an infection trace from one example bot, a

variant of the Phatbot (aka Gaobot) family [4].

Figure 1 presents a summary of communication exchanges

that were observed during a local host Phatbot infection.

As with many common bots that propagate through remote

exploit injection, Phatbot first (step 1) probes an address range in

search of exploitable network services or responses from Trojan

backdoors that may be used to enter and hijack the infected

machine. If Phatbot receives a connection reply to one of the targeted

ports on a host, it then launches an exploit or logs in to the host

using a backdoor. In our

experimental case, a Windows workstation replies to a 135-TCP (MS

DCE/RPC) connection request, establishing a connection that leads to

an immediate RPC buffer overflow (step 2). Once infected, the victim

host is directed by an upload shell script to open a communication

channel back to the attacker to download the full Phatbot binary

(step 3). The bot inserts itself into the system boot process, turns

off security software, probes the local network for additional NetBIOS

shares, and secures the host from other malware that may be

loaded on the machine. The infected victim next distinguishes itself

as a bot by establishing a connection to a botnet C&C server, which

in the case of Phatbot is established over an IRC channel (step 4).

Finally, the newly infected bot establishes a listen port to accept

new binary updates and begins scanning other external victims on

behalf of the botnet (step 5).

3 Modeling the Infection Dialog Process

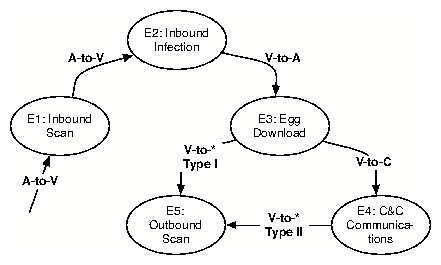

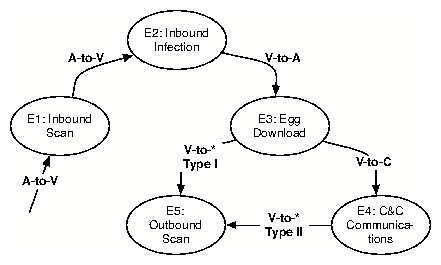

While Figure 1 presents an example of a specific bot,

the events enumerated are highly representative of the life cycle phases

that we encounter across the various bot families that

we have analyzed. Our bot propagation model is primarily driven by an

assessment of outward-bound communication flows that are indicative of

behavior associated with botnet coordination. Where possible, we seek

to associate such outbound communication patterns with observed

inbound intrusion activity. However, this latter

activity is not a requirement for bot declaration. Neither are

incoming scan and exploit alarms sufficient to declare a successful

malware infection, as we assume that a constant stream of scan

and exploit signals will be observed from the egress monitor.

We model an infection sequence as a composition of participants and a loosely

ordered sequence of exchanges: Infection I = < A, V, C, V',E,

D > , where A =

Attacker, V = Victim, E = Egg Download Location, C = C&C Server, and V' = the

Victim's next propagation target. D represents an infection dialog sequence

composed of bidirectional flows that cross the egress boundary. Our

infection dialog D is composed of a set of five potential

dialog transactions (E1, E2, E3, E4, E5), some subset of which

may be observed during an instance of a local host infection:

- E1: External to Internal Inbound Scan

- E2: External to Internal Inbound Exploit

- E3: Internal to External Binary Acquisition

- E4: Internal to External C&C Communication

- E5: Internal to External Outbound Infection Scanning

Figure 1: Phatbot Dialog Summary

Figure 2: Bot Infection Dialog Model

Figure 2 illustrates our bot infection dialog model used

for assessing bidirectional flows across the network boundary. Our

dialog model is similar to the model presented by Rajab et al. in

their analysis of 192 IRC bot instances [33]. However, the

two models differ in ways that arise because of our specific perspective

of egress boundary monitoring. For example, we incorporate early

initial scanning, which is often a preceding observation that occurs

usually in the form of IP sweeps that target a relatively small set of

selected vulnerable ports. We also exclude DNS C&C lookups, which

Rajab et al. [33] include as a consistent precursor to

C&C coordination, because DNS lookups are often locally handled or

made through a designated DNS server via internal packet exchanges

that should not be assumed visible from the egress position. Further,

we exclude local host modifications and internal network propagation

because these are also events that are not assumed to be visible from

the egress point. Finally, we include internal-to-external attack

propagation, which Rajab et al. [33] exclude. While

our model is currently targeted for passive network monitoring

events, it will be straightforward to include localhost-based or

DNS-server-based IDSs that can augment our dialog model.

Figure 2 is not intended to provide a strict ordering of

events, but rather to capture a typical infection dialog (exceptions to

which we discuss below). In the idealized sequence of a

direct-exploit bot infection dialog, the bot infection begins with an

external-to-internal communication flow that may encompass bot

scanning (E1) or a direct inbound exploit (E2). When an internal host

has been successfully compromised (we observe that many compromise attempts

regularly end with process dumps or system freezes), the newly

compromised host downloads and instantiates a full malicious

binary instance of the bot (E3). Once the full binary instance of the

bot is retrieved and executed, our model accommodates two potential

dialog paths, which Rajab et al. [33] refer to as the bot

Type I versus Type II split. Under Type II bots, the infected host

proceeds to C&C server coordination (E4) before attempting

self-propagation. Under a Type I bot, the infected host

immediately moves to outbound scanning and attack propagation (E5),

representing a classic worm infection.

We assume that bot dialog sequence analysis must be robust to the

absence of some dialog events, must allow for multiple contributing

candidates for each of the various dialog phases, and must not require

strict sequencing on the order in which outbound dialog is conducted.

Furthermore, in practice we have observed that for Type II infections,

time delays between the initial infection events (E1 and E2) and

subsequent outbound dialog events (E3, E4, and E5) can be

significant-on the order of several hours. Furthermore, our model

must be robust to failed E1 and E2 detections, possibly due to

insufficient IDS fidelity or due to malware infections that occur

through avenues other than direct remote exploit.

One approach to addressing the challenges of sequence order and event

omission is to use a weighted event threshold system that captures the

minimum necessary and sufficient sparse sequences of events under

which bot profile declarations can be triggered. For example, one can

define a weighting and threshold scheme for the appearance of each

event such that a minimum set of event combinations is required

before bot detection. In our case, we assert that bot infection

declaration requires a minimum of

Condition 1: Evidence of local host infection (E2), AND evidence

of outward bot coordination or attack propagation (E3-E5); or

Condition 2: At least two distinct signs of outward bot

coordination or attack propagation (E3-E5).

In our description of the BotHunter correlation engine in

Section 4, we discuss a weighted event threshold scheme that

enforces the above minimum requirement for bot declaration.

4 BotHunter: System Design

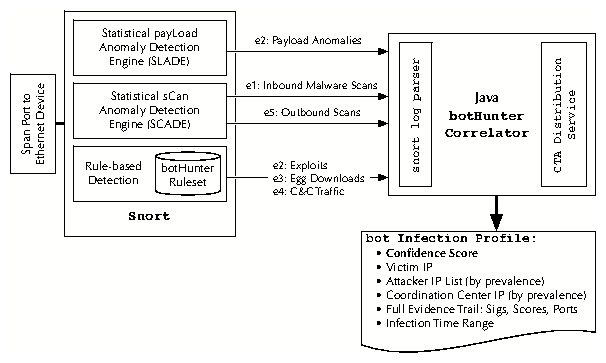

We now turn our attention to the design of a passive monitoring system

capable of recognizing the bidirectional warning signs of local host

infections, and correlating this evidence against our dialog infection

model. Our system, referred to as BotHunter, is composed of a trio of

IDS components that monitor in- and out-bound traffic flows, coupled

with our dialog correlation engine that produces consolidated pictures

of successful bot infections. We envision BotHunter to be located at

the boundary of a network, providing it a vantage point to observe the

network communication flows that occur between the network's internal

hosts and the Internet. Figure 3 illustrates the

components within the BotHunter package.

Figure 3: BotHunter System Architecture

Our IDS detection capabilities are composed on top of the open source

release of Snort [35]. We take full advantage of Snort's

signature engine, incorporating an extensive set of malware-specific signatures

that we developed internally or compiled from the highly active Snort

community (e.g., [10] among other sources). The signature

engine enables us to produce dialog warnings for inbound exploit

usage, egg downloading, and C&C patterns, as discussed in

Section 4.1.3. In addition, we have

developed two custom plugins that complement the Snort

signature engine's ability to produce certain dialog warnings. Note

that we refer to the various IDS alarms as dialog warnings

because we do not intend the individual alerts to be processed by

administrators in search of bot or worm activity. Rather, we use the

alerts produced by our sensors as input to drive a bot dialog

correlation analysis, the results of which are intended to capture and

report the actors and evidence trail of a complete bot infection

sequence.

Our two custom BotHunter plugins are called SCADE and SLADE. SCADE,

discussed in Section 4.1.1, provides inbound and outbound scan detection

warnings that are weighted for sensitivity toward malware-specific

scanning patterns. SLADE, discussed in Section 4.1.2,

conducts a byte-distribution payload anomaly detection of inbound

packets, providing a complementary non-signature approach in inbound

exploit detection.

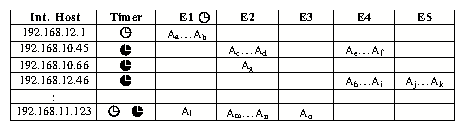

Our BotHunter correlator is charged with maintaining an assessment of

all dialog exchanges, as seen through our sensor dialog warnings,

between all local hosts communicating with external entities across

the Internet. The BotHunter correlator manages the state of all

dialog warnings produced per local host in a data structure we refer

to as the network dialog correlation matrix

(Figure 4). Evidence of local host infection is

evaluated and expired from our correlator until a sufficient

combination of dialog warnings (E1-E5) crosses a weighted

threshold. When the bot infection threshold is crossed for a given

host, we produce a bot infection profile (illustrated in

Figure 7).

Finally, our correlator also incorporates a module that allows users to

report bot infection profiles to a remote repository for global collection

and evaluation of bot activity. For this purpose, we utilize the Cyber-TA

privacy-enabled alert delivery infrastructure [32]. Our delivery

infrastructure first anonymizes all source-local addresses reported within the

bot infection profile, and then delivers the profile to our data repository

through a TLS over TOR [15] (onion routing protocol) network connection.

These profiles will be made available to the research community, ideally to

help in the large-scale assessment of bot dialog behavior, the sources and

volume of various bot infections, and for surveying where C&C servers

and exploit sources are located.

Figure 3: BotHunter System Architecture

Our IDS detection capabilities are composed on top of the open source

release of Snort [35]. We take full advantage of Snort's

signature engine, incorporating an extensive set of malware-specific signatures

that we developed internally or compiled from the highly active Snort

community (e.g., [10] among other sources). The signature

engine enables us to produce dialog warnings for inbound exploit

usage, egg downloading, and C&C patterns, as discussed in

Section 4.1.3. In addition, we have

developed two custom plugins that complement the Snort

signature engine's ability to produce certain dialog warnings. Note

that we refer to the various IDS alarms as dialog warnings

because we do not intend the individual alerts to be processed by

administrators in search of bot or worm activity. Rather, we use the

alerts produced by our sensors as input to drive a bot dialog

correlation analysis, the results of which are intended to capture and

report the actors and evidence trail of a complete bot infection

sequence.

Our two custom BotHunter plugins are called SCADE and SLADE. SCADE,

discussed in Section 4.1.1, provides inbound and outbound scan detection

warnings that are weighted for sensitivity toward malware-specific

scanning patterns. SLADE, discussed in Section 4.1.2,

conducts a byte-distribution payload anomaly detection of inbound

packets, providing a complementary non-signature approach in inbound

exploit detection.

Our BotHunter correlator is charged with maintaining an assessment of

all dialog exchanges, as seen through our sensor dialog warnings,

between all local hosts communicating with external entities across

the Internet. The BotHunter correlator manages the state of all

dialog warnings produced per local host in a data structure we refer

to as the network dialog correlation matrix

(Figure 4). Evidence of local host infection is

evaluated and expired from our correlator until a sufficient

combination of dialog warnings (E1-E5) crosses a weighted

threshold. When the bot infection threshold is crossed for a given

host, we produce a bot infection profile (illustrated in

Figure 7).

Finally, our correlator also incorporates a module that allows users to

report bot infection profiles to a remote repository for global collection

and evaluation of bot activity. For this purpose, we utilize the Cyber-TA

privacy-enabled alert delivery infrastructure [32]. Our delivery

infrastructure first anonymizes all source-local addresses reported within the

bot infection profile, and then delivers the profile to our data repository

through a TLS over TOR [15] (onion routing protocol) network connection.

These profiles will be made available to the research community, ideally to

help in the large-scale assessment of bot dialog behavior, the sources and

volume of various bot infections, and for surveying where C&C servers

and exploit sources are located.

4.1 A Multiple-Sensor Approach to Gathering Infection Evidence

4.1.1 SCADE: Statistical sCan Anomaly Detection Engine

Recent measurement studies suggest that modern bots are packaged with

around 15 exploit vectors on average [33] to improve

opportunities for exploitation. Depending on how the attack source

scans its target, we are likely to encounter some failed connection attempts

prior to a successful infection.

To address this form aspect of malware interaction, we have designed

SCADE, a Snort preprocessor plug-in with two modules, one for inbound

scan detection (E1 dialog warnings) and another for detecting outbound

attack propagations (E5 dialog warnings) once our local system is

infected. SCADE E1 alarms provide a potential early bound on the

start of an infection, should this scan eventually lead to a

successful infection.

Inbound Scan Detection: SCADE is similar in principle to

existing scan detection techniques like

[35,24]. However, SCADE has been

specifically weighted toward the detection of scans involving the

ports often used by malware. It is also less vulnerable to DoS

attacks because its memory trackers do not maintain per-source-IP

state. Similar to the scan detection technique proposed

in [48], SCADE tracks only scans that are specifically

targeted to internal hosts, bounding its memory usage to the number of

inside hosts. SCADE also bases its E1 scan detection on failed

connection attempts, further narrowing its processing. We define two

types of ports:

HS (high-severity) ports representing highly vulnerable and

commonly exploited services (e.g., 80/HTTP, 135,1025/DCOM,

445/NetBIOS, 5000/UPNP, 3127/MyDoom) and LS (low-severity)

ports.2 Currently, we define

26 TCP and 4 UDP HS ports and mark all others as

LS ports. We set different weights to a failed scan attempt to

different types of ports. An E1 dialog warning for a local host is

produced based on an anomaly score that is calculated as s = w1Fhs+w2Fls, where Fhs and Fls indicate numbers of

cumulative failed attempts at high-severity and low-severity ports,

respectively.

Outbound Scan Detection: SCADE's outbound scan detection coverage

for E5 dialog warnings is based on a voting scheme (AND, OR or MAJORITY)

of three parallel anomaly detection models that track all

external outbound connections per internal host:

- Outbound scan rate (s1): Detects local hosts that

conduct high-rate scans across large sets of external addresses.

- Outbound connection failure rate (s2): Detects abnormally high

connection fail rates, with sensitivity to HS port usage. We

calculate the anomaly score s2=(w1Fhs+w2Fls)/C, where C is

the total number of scans from the host within a time window.

- Normalized entropy of scan target distribution (s3):

Calculates a Zipf (power-law) distribution of outbound address

connection patterns [3]. A uniformly distributed

scan target pattern provides an indication of a potential outbound

scan. We use an anomaly scoring technique based on normalized

entropy to identify such candidates: s3 = H/ln(m), where

the entropy of scan target distribution is H=-

i=1mpiln(pi), m is the total number of scan targets, and pi is

the percentage of the scans at target i. i=1mpiln(pi), m is the total number of scan targets, and pi is

the percentage of the scans at target i.

Each anomaly module issues a subalert when si ti, where ti

is a threshold. SCADE then uses a user-configurable "voting

scheme", i.e., AND, OR, or MAJORITY, to combine the alerts from the

three modules. For example, the AND rule dictates that SCADE issues an

alert when all three modules issue an alert. ti, where ti

is a threshold. SCADE then uses a user-configurable "voting

scheme", i.e., AND, OR, or MAJORITY, to combine the alerts from the

three modules. For example, the AND rule dictates that SCADE issues an

alert when all three modules issue an alert.

4.1.2 SLADE: Statistical PayLoad Anomaly

Detection Engine

SLADE is an anomaly-based engine for payload exploit detection.

It examines the payload of every request packet sent to monitored

services and outputs an alert if its lossy n-gram frequency

deviates from an established normal profile.

SLADE is similar to PAYL [46], which is a payload-based

1-gram byte distribution anomaly detection scheme. PAYL examines the

1-gram byte distribution of the packet payload, i.e., it extracts 256

features each representing the occurrence frequency of one of the 256

possible byte values in the payload. A normal profile for a

service/port, e.g., HTTP, is constructed by calculating the average

and standard deviation of the feature vector of the normal traffic to

the port. PAYL calculates deviation distance of a test payload from

the normal profile using a simplified Mahalanobis distance,

d(x,y)= i=0255 (|xi-yi|)/(si+a), where yi

is the mean, si is the standard deviation, and a is a

smoothing factor. A payload is considered as anomalous if this

distance exceeds a predetermined threshold. PAYL is effective in

detecting worm exploits with a reasonable false positive rate as shown

in [46,47]. However, it could be evaded by a

polymorphic blending attack (PBA) [18]. As discussed

in [47,18,31], a generic

n-gram version of PAYL may help to improve accuracy and the hardness

of evasion. The n-grams extract n-byte sequence information from the

payload, which helps in constructing a more precise model of the

normal traffic compared to the single-byte (i.e., 1-gram)

frequency-based model. In this case the feature space in use is not

256, but 256n dimensional.

It is impractical to store and compute in a 256n dimension

space for high-n-grams.

SLADE makes the n-gram scheme practical by using a lossy structure

while still maintaining approximately the same accuracy as the

original full n-gram version. We use a fixed vector counter (with size

v) to store a lossy n-gram distribution of the payload. When

processing a payload, we sequentially scan n-gram substring str,

apply some universal hash function h(), and increment the counter at

the vector space indexed by h(str) mod v. We then calculate the

distribution of the hashed n-gram indices within this (much) smaller

vector space v. We define F as the feature space of

n-gram PAYL (with a total of 256n distinct features), and

F' as the feature space of SLADE (with v

features).

This hash function provides a mapping from F to

F' that we utilize for space efficiency. We require only

v (e.g., v=2,000), whereas n-gram PAYL needs 256n (e.g., even for

a small n=3, 2563=224 i=0255 (|xi-yi|)/(si+a), where yi

is the mean, si is the standard deviation, and a is a

smoothing factor. A payload is considered as anomalous if this

distance exceeds a predetermined threshold. PAYL is effective in

detecting worm exploits with a reasonable false positive rate as shown

in [46,47]. However, it could be evaded by a

polymorphic blending attack (PBA) [18]. As discussed

in [47,18,31], a generic

n-gram version of PAYL may help to improve accuracy and the hardness

of evasion. The n-grams extract n-byte sequence information from the

payload, which helps in constructing a more precise model of the

normal traffic compared to the single-byte (i.e., 1-gram)

frequency-based model. In this case the feature space in use is not

256, but 256n dimensional.

It is impractical to store and compute in a 256n dimension

space for high-n-grams.

SLADE makes the n-gram scheme practical by using a lossy structure

while still maintaining approximately the same accuracy as the

original full n-gram version. We use a fixed vector counter (with size

v) to store a lossy n-gram distribution of the payload. When

processing a payload, we sequentially scan n-gram substring str,

apply some universal hash function h(), and increment the counter at

the vector space indexed by h(str) mod v. We then calculate the

distribution of the hashed n-gram indices within this (much) smaller

vector space v. We define F as the feature space of

n-gram PAYL (with a total of 256n distinct features), and

F' as the feature space of SLADE (with v

features).

This hash function provides a mapping from F to

F' that we utilize for space efficiency. We require only

v (e.g., v=2,000), whereas n-gram PAYL needs 256n (e.g., even for

a small n=3, 2563=224 16M). The computational complexity

in examining each payload is still linear (O(L), where L is the

length of payload), and the complexity in calculating distance is

O(v) instead of 256n. Thus, the runtime performance of SLADE is

comparable to 1-gram PAYL. Also note that although both use hashing

techniques, SLADE is different from Anagram [45],

which uses a Bloom filter to store all n-gram substrings from normal

payloads. The hash function in SLADE is for feature compression and reduction, however the hash functions in Anagram are to reduce the false positives of string lookup in Bloom filter.

In essence, Anagram is like a content matching scheme. It builds a huge knowledge base of all

known good n-gram substrings using efficient storage and query

optimizations provided by bloom filters, and examines a payload to determine

whether the number of its n-gram substrings not in the

knowledge base exceeds a threshold.

A natural concern of using such a lossy data structure is the issue of

accuracy: how many errors (false positives and false negatives)

may be introduced because of the lossy representation? To answer this

question, we perform the following simple analysis.3 Let us

first overview the reason why the original n-gram PAYL can detect

anomalies. We use g to represent the number of non-zero value

features in F for a normal profile used by

PAYL. Similarly, g' is the number of non-zero value features in

F' for a normal profile used by SLADE. For a normal

payload of length=L, there is a total of l=(L-n+1) n-gram

substrings. Among these l substrings, 1-bn percent substrings

converge to g distinct features in the normal profile, i.e.,

these substrings share similar distributions as the normal

profile. The remaining (small portion) bn percent of substrings

are considered as noise substrings that do not belong to the g

features in the normal profile. For a malicious payload, if it can be

detected as an anomaly, it should have a much larger portion of

noise substrings ba (ba > bn).

We first analyze the false positives when using the lossy structure

representation to see how likely SLADE will detect a normal

(considered normal by n-gram PAYL) payload as anomalous. For a normal

payload, the hashed indices of a 1-bn portion of substrings (that

converge to g distinct features in F for the normal

profile of PAYL) should now converge in the new vector space (into

g' distinct features in F' for the normal profile of

SLADE). Because of the universal hash function, hashed indices of the

bn portion of noise substrings are most likely uniformly distributed

into F'. As a result, some of the original noise

substrings may actually be hashed to the g' distinct features

in the normal profile of SLADE (i.e., they may not be noise in the new

feature space now). Thus, the deviation distance (i.e., the anomaly

score) can only decrease in SLADE. Hence, we conclude that SLADE

may not have a higher false positive rate than n-gram PAYL.

Now let us analyze the false negative rate, i.e., the likelihood

that SLADE will treat a malicious payload (as would be detected by

n-gram PAYL) as normal. False negatives happen when the hash

collisions in the lossy structure mistakenly map a ba portion

of noise substrings into the g' features (i.e., the

normal profile) for SLADE. By using the universal hash function, the

probability for a noise substring to fall into g' out of v

space is [(g')/v]. Thus, the probability for all the

lba noise substrings to collide into the g' portion is

about ([(g')/v])lba. For example, if we assume

v=2,000, g'=200, lba=100, then this probability is about

(200/2000)100=1e-100 16M). The computational complexity

in examining each payload is still linear (O(L), where L is the

length of payload), and the complexity in calculating distance is

O(v) instead of 256n. Thus, the runtime performance of SLADE is

comparable to 1-gram PAYL. Also note that although both use hashing

techniques, SLADE is different from Anagram [45],

which uses a Bloom filter to store all n-gram substrings from normal

payloads. The hash function in SLADE is for feature compression and reduction, however the hash functions in Anagram are to reduce the false positives of string lookup in Bloom filter.

In essence, Anagram is like a content matching scheme. It builds a huge knowledge base of all

known good n-gram substrings using efficient storage and query

optimizations provided by bloom filters, and examines a payload to determine

whether the number of its n-gram substrings not in the

knowledge base exceeds a threshold.

A natural concern of using such a lossy data structure is the issue of

accuracy: how many errors (false positives and false negatives)

may be introduced because of the lossy representation? To answer this

question, we perform the following simple analysis.3 Let us

first overview the reason why the original n-gram PAYL can detect

anomalies. We use g to represent the number of non-zero value

features in F for a normal profile used by

PAYL. Similarly, g' is the number of non-zero value features in

F' for a normal profile used by SLADE. For a normal

payload of length=L, there is a total of l=(L-n+1) n-gram

substrings. Among these l substrings, 1-bn percent substrings

converge to g distinct features in the normal profile, i.e.,

these substrings share similar distributions as the normal

profile. The remaining (small portion) bn percent of substrings

are considered as noise substrings that do not belong to the g

features in the normal profile. For a malicious payload, if it can be

detected as an anomaly, it should have a much larger portion of

noise substrings ba (ba > bn).

We first analyze the false positives when using the lossy structure

representation to see how likely SLADE will detect a normal

(considered normal by n-gram PAYL) payload as anomalous. For a normal

payload, the hashed indices of a 1-bn portion of substrings (that

converge to g distinct features in F for the normal

profile of PAYL) should now converge in the new vector space (into

g' distinct features in F' for the normal profile of

SLADE). Because of the universal hash function, hashed indices of the

bn portion of noise substrings are most likely uniformly distributed

into F'. As a result, some of the original noise

substrings may actually be hashed to the g' distinct features

in the normal profile of SLADE (i.e., they may not be noise in the new

feature space now). Thus, the deviation distance (i.e., the anomaly

score) can only decrease in SLADE. Hence, we conclude that SLADE

may not have a higher false positive rate than n-gram PAYL.

Now let us analyze the false negative rate, i.e., the likelihood

that SLADE will treat a malicious payload (as would be detected by

n-gram PAYL) as normal. False negatives happen when the hash

collisions in the lossy structure mistakenly map a ba portion

of noise substrings into the g' features (i.e., the

normal profile) for SLADE. By using the universal hash function, the

probability for a noise substring to fall into g' out of v

space is [(g')/v]. Thus, the probability for all the

lba noise substrings to collide into the g' portion is

about ([(g')/v])lba. For example, if we assume

v=2,000, g'=200, lba=100, then this probability is about

(200/2000)100=1e-100

0. In practice, the probability of

such collisions for partial noise substrings is negligible.

Thus, we believe that SLADE does not incur a significant

accuracy penalty compared to full n-gram PAYL, while significantly

reducing its storage and computation complexity.

We measured the performance of SLADE

in comparison to 1-gram PAYL by using the same data set as in

[31]. The training and test data sets

used were from the first and following four days of HTTP requests from the

Georgia Tech campus network, respectively. The attack data consists of

18 HTTP-based buffer overflow attacks, including 11 regular

(nonpolymorphic) exploits, 6 mimicry exploits generated by CLET, and 1

polymorphic blending attack used in [18] to evade 2-gram

PAYL. In our experiment, we set n=4,v=2,048.4

Table 1 summarizes our experimental results. Here,

DFP is the desired false positive rate, i.e., the rejection rate

in the training set. RFP is the "real" false positive rate in our

test data set. The detection rate is measured on the attack data set

and is defined as the

number of attack packets classified as anomalous divided by the

total number of packets in the attack instances. We

conclude from the results that SLADE performs better with respect to both

DFP and RFP than the original PAYL (1-gram) system. Furthermore, we

discovered that the minimum RFP for which PAYL is able to detect all attacks,

including the polymorphic blending attack, is 4.02%. This is usually

considered intolerably high for network intrusion detection. On the

other hand, the minimum RFP required for SLADE to detect all attacks

is 0.3601%. As shown in [31], 2-gram

PAYL does not detect the polymorphic blending attack even if we are

willing to tolerate an RFP as high as 11.25%. This is not surprising

given that the polymorphic blending attack we used was specifically

tailored to evade 2-gram PAYL. We also find that SLADE is comparable

to (or even better than) a well-constructed ensemble IDS that combines

11 one-class SVM classifiers [31],

and detects all the attacks, including the polymorphic blending attack,

for an RFP at around 0.49%. SLADE also has the added advantage of

more efficient resource utilization, which results in shorter

training and execution times when compared to the ensemble IDS.

Table 1: Performance of 1-gram PAYL and SLADE 0. In practice, the probability of

such collisions for partial noise substrings is negligible.

Thus, we believe that SLADE does not incur a significant

accuracy penalty compared to full n-gram PAYL, while significantly

reducing its storage and computation complexity.

We measured the performance of SLADE

in comparison to 1-gram PAYL by using the same data set as in

[31]. The training and test data sets

used were from the first and following four days of HTTP requests from the

Georgia Tech campus network, respectively. The attack data consists of

18 HTTP-based buffer overflow attacks, including 11 regular

(nonpolymorphic) exploits, 6 mimicry exploits generated by CLET, and 1

polymorphic blending attack used in [18] to evade 2-gram

PAYL. In our experiment, we set n=4,v=2,048.4

Table 1 summarizes our experimental results. Here,

DFP is the desired false positive rate, i.e., the rejection rate

in the training set. RFP is the "real" false positive rate in our

test data set. The detection rate is measured on the attack data set

and is defined as the

number of attack packets classified as anomalous divided by the

total number of packets in the attack instances. We

conclude from the results that SLADE performs better with respect to both

DFP and RFP than the original PAYL (1-gram) system. Furthermore, we

discovered that the minimum RFP for which PAYL is able to detect all attacks,

including the polymorphic blending attack, is 4.02%. This is usually

considered intolerably high for network intrusion detection. On the

other hand, the minimum RFP required for SLADE to detect all attacks

is 0.3601%. As shown in [31], 2-gram

PAYL does not detect the polymorphic blending attack even if we are

willing to tolerate an RFP as high as 11.25%. This is not surprising

given that the polymorphic blending attack we used was specifically

tailored to evade 2-gram PAYL. We also find that SLADE is comparable

to (or even better than) a well-constructed ensemble IDS that combines

11 one-class SVM classifiers [31],

and detects all the attacks, including the polymorphic blending attack,

for an RFP at around 0.49%. SLADE also has the added advantage of

more efficient resource utilization, which results in shorter

training and execution times when compared to the ensemble IDS.

Table 1: Performance of 1-gram PAYL and SLADE

| DFP(%) | 0.0 | 0.01 | 0.1 | 1.0 | 2.0 | 5.0 | 10.0 |

| PAYL | RFP(%) | 0.00022 | 0.01451 | 0.15275 | 0.92694 | 1.86263 | 5.69681 | 11.05049 |

| Detected Attacks | 1 | 4 | 17 | 17 | 17 | 18 | 18 |

| Detection Rate(%) | 0.8 | 17.5 | 69.1 | 72.2 | 72.2 | 73.8 | 78.6 |

| SLADE | RFP(%) | 0.0026 | 0.0189 | 0.2839 | 1.9987 | 3.3335 | 6.3064 | 11.0698 |

| Detected Attacks | 3 | 13 | 17 | 18 | 18 | 18 | 18 |

| Detection Rate(%) | 20.6 | 74.6 | 92.9 | 99.2 | 99.2 | 99.2 | 99.2 |

4.1.3 Signature Engine: Bot-Specific Heuristics

Our final sensor contributor is the Snort signature engine. This

module plays a significant role in detecting several of the classes of

dialog warnings from our bot infection dialog model. Snort is our

second sensor source for direct exploit detection (class E2), and our

primary source for binary downloading (E3) and C&C communications

(E4). We organize the rules selected for BotHunter into four separate

rule files, covering 1046 E2 rules, 71 E3 rules, 246 E4 rules, and a

small collection of 20 E5 rules, for total of 1383 heuristics. The

rules are primarily derived from the Bleeding-Edge [10] and

SourceFire's registered free rulesets.

All the rulesets were selected specifically for their relevance to

malware identification. Our rule selections are continually tested

and reviewed across operational networks and our live honeynet

environment. It is typical for our rule-based heuristics to produce

less than 300 dialog warnings per 10-day period monitoring an

operational border switch space port of approximately 130 operational

hosts (SRI Computer Science Laboratory).

Our E2 ruleset focuses on the full spectrum of external to internal

exploit injection attacks, and has been tested and augmented with

rules derived from experimentation in our medium and high interactive

honeynet environment, where we can observe and validate live malware

infection attempts. Our E3 rules focus on (malware)

executable download events from external sites to internal networks,

covering as many indications of (malicious) binary executable downloads

and download acknowledgment events as are in the publicly

available Snort rulesets. Our E4 rules cover internally-initiated bot

command and control dialog, and acknowledgment exchanges, with a

significant emphasis on IRC and URL-based bot coordination.5 Also covered are

commonly used Trojan backdoor communications, and popular bot commands

built by keyword searching across common major bot families and their

variants. A small set of E5 rules is also incorporated to detect

well-known internal to external backdoor sweeps, while SCADE provides

the more in-depth hunt for general outbound port scanning.

4.2 Dialog-Based IDS Correlation Engine

The BotHunter correlator tracks the sequences of

IDS dialog warnings that occur between each local host and those

external entities involved in these dialog exchanges. Dialog warnings

are tracked over a temporal window, where each contributes to an

overall infection sequence score that is maintained per local host.

We introduce a data structure called the network dialog

correlation matrix, which is managed, pruned, and evaluated by our

correlation engine at each dialog warning insertion point. Our correlator

employs a weighted threshold scoring function that aggregates the

weighted scores of each dialog warning, declaring a local host

infected when a minimum combination of dialog transactions

occur within our temporal pruning interval.

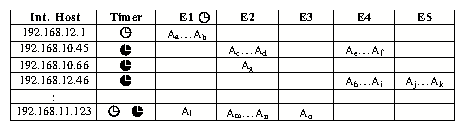

Figure 4 illustrates the structure of our

network dialog correlation matrix. Each dynamically-allocated row

corresponds to a summary of the ongoing dialog warnings that are

raised between an individual local host and other external entities.

The BotHunter correlator manages the five classes of dialog warnings

presented in Section 3 (E1 through E5), and each event cell

corresponds to one or more (possibly aggregated) sensor alerts that

map into one of these five dialog warning classes. This correlation

matrix dynamically grows when new activity involving a local host is

detected, and shrinks when the observation window reaches an interval

expiration.

Figure 4: BotHunter Network Dialog Correlation Matrix

In managing the dialog transaction history we employ an interval-based

pruning algorithm to remove old dialog from the matrix. In

Figure 4, each dialog may have one or two

expiration intervals, corresponding to a soft prune timer (the

open-faced clocks) and a hard prune timer (the filled

clocks). The hard prune interval represents a fixed temporal interval

over which dialog warnings are allowed to aggregate, and the end of

which results in the calculation of our threshold score. The soft

prune interval represents a smaller temporal window that allows users

to configure tighter pruning interval requirements for high-production

dialog warnings (inbound scan warnings are expired more

quickly by the soft prune interval), while the others are allowed to

accumulate through the hard prune interval. If a dialog warning

expires solely because of a soft prune timer, the dialog is summarily

discarded for lack of sufficient evidence (an example is row 1 in

Figure 4 where only E1 has alarms). However, if a

dialog expires because of a hard prune timer, the dialog threshold score

is evaluated, leading either to a bot declaration or to the complete

removal of the dialog trace should the threshold score be found

insufficient.

To declare that a local host is infected, BotHunter must compute a

sufficient and minimum threshold of evidence (as defined in Section 3)

within its pruning interval. BotHunter employs two potential criteria

required for bot declaration: 1) an incoming infection warning (E2)

followed by outbound local host coordination or exploit propagation

warnings (E3-E5), or 2) a minimum of at least two forms of outbound

bot dialog warnings (E3-E5). To translate these requirements into a

scoring algorithm we employ a regression model to estimate dialog

warning weights and a threshold value, and then test our values

against a corpus of malware infection traces. We define an

expectation table of predictor variables that match our conditions

and apply a regression model where the estimated regression

coefficients are the desired weights shown in

Table 2. For completeness, the computed

expectation table is provided in the project

website [1].

Figure 4: BotHunter Network Dialog Correlation Matrix

In managing the dialog transaction history we employ an interval-based

pruning algorithm to remove old dialog from the matrix. In

Figure 4, each dialog may have one or two

expiration intervals, corresponding to a soft prune timer (the

open-faced clocks) and a hard prune timer (the filled

clocks). The hard prune interval represents a fixed temporal interval

over which dialog warnings are allowed to aggregate, and the end of

which results in the calculation of our threshold score. The soft

prune interval represents a smaller temporal window that allows users

to configure tighter pruning interval requirements for high-production

dialog warnings (inbound scan warnings are expired more

quickly by the soft prune interval), while the others are allowed to

accumulate through the hard prune interval. If a dialog warning

expires solely because of a soft prune timer, the dialog is summarily

discarded for lack of sufficient evidence (an example is row 1 in

Figure 4 where only E1 has alarms). However, if a

dialog expires because of a hard prune timer, the dialog threshold score

is evaluated, leading either to a bot declaration or to the complete

removal of the dialog trace should the threshold score be found

insufficient.

To declare that a local host is infected, BotHunter must compute a

sufficient and minimum threshold of evidence (as defined in Section 3)

within its pruning interval. BotHunter employs two potential criteria

required for bot declaration: 1) an incoming infection warning (E2)

followed by outbound local host coordination or exploit propagation

warnings (E3-E5), or 2) a minimum of at least two forms of outbound

bot dialog warnings (E3-E5). To translate these requirements into a

scoring algorithm we employ a regression model to estimate dialog

warning weights and a threshold value, and then test our values

against a corpus of malware infection traces. We define an

expectation table of predictor variables that match our conditions

and apply a regression model where the estimated regression

coefficients are the desired weights shown in

Table 2. For completeness, the computed

expectation table is provided in the project

website [1].

| Coefficients | Standard Error |

| E1 | 0.09375 | 0.100518632 |

| E2 rulebase | 0.28125 | 0.075984943 |

| E2 slade | 0.09375 | 0.075984943 |

| E3 | 0.34375 | 0.075984943 |

| E4 | 0.34375 | 0.075984943 |

| E5 | 0.34375 | 0.075984943 |

Table 2:

Initial Weighting

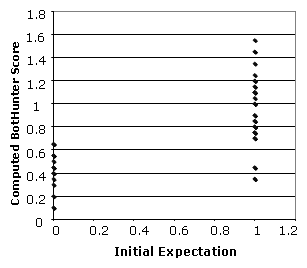

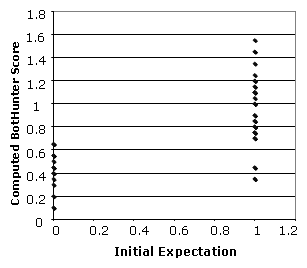

These coefficients provide an approximate weighting system to match

the initial expectation table 6. We apply these values to our expectation table data

to establish a threshold between bot and no-bot declaration.

Figure 5 illustrates our results,

where bot patterns are at X-axis value 1, and non-bot patterns are at

X-axis 0. Bot scores are plotted vertically on the Y-axis. We observe

that all but one non-bot patterns score below 0.6, and all but 2 bot

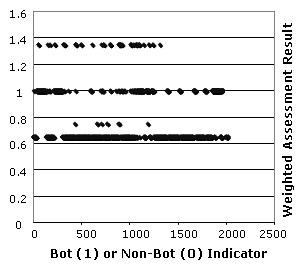

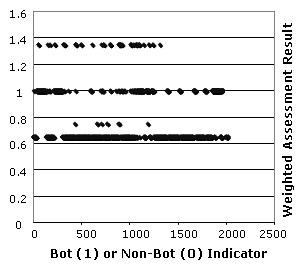

patterns score above 0.65. Next, we examine our scoring model against

a corpus of BotHunter IDS warning sets produced from successful bot

and worm infections captured in the SRI honeynet between March and

April 2007. Figure 6 plots the

actual bot scores produced from these real bot infection traces. All

observations produce BotHunter scores of 0.65 or greater.

Figure 5: Scoring Plot from Expectation Table

Figure 5: Scoring Plot from Expectation Table

Figure 6: Scoring Plot: 2019 Real Bot Infections

When a dialog sequence is found to cross the threshold for bot

declaration, BotHunter produces a bot profile. The bot profile

represents a full analysis of roles of the dialog participants,

summarizes the dialog alarms based on which dialog classes (E1-E5) the

alarms map, and computes the infection time interval.

Figure 7 (right) provides an example of a bot

profile produced by the BotHunter correlation engine. The bot profile

begins with an overall dialog anomaly score, followed by the IP

address of the infected target (the victim machine), infector list,

and possible C&C server. Then it outputs the dialog observation

time and reporting time. The raw alerts specific to this dialog are

listed in an organized (E1-E5) way and provide some detailed

information.

Figure 6: Scoring Plot: 2019 Real Bot Infections

When a dialog sequence is found to cross the threshold for bot

declaration, BotHunter produces a bot profile. The bot profile

represents a full analysis of roles of the dialog participants,

summarizes the dialog alarms based on which dialog classes (E1-E5) the

alarms map, and computes the infection time interval.

Figure 7 (right) provides an example of a bot

profile produced by the BotHunter correlation engine. The bot profile

begins with an overall dialog anomaly score, followed by the IP

address of the infected target (the victim machine), infector list,

and possible C&C server. Then it outputs the dialog observation

time and reporting time. The raw alerts specific to this dialog are

listed in an organized (E1-E5) way and provide some detailed

information.

5 Evaluating Detection Performance

To evaluate BotHunter's performance, we conducted several controlled

experiments as well as real world deployment evaluations. We begin

this section with a discussion of our detection performance while

exposing BotHunter to infections from a wide variety of bot families

using in situ virtual network experiments. We then discuss a

larger set of true positive and false negative results while deploying

BotHunter to a live VMWare-based high-interaction honeynet. This

recent experiment exposed BotHunter to 2,019 instances of Windows XP

and Windows 2000 direct-exploit malware infections from the Internet.

We follow these controlled experiments with a brief discussion of an

example detection experience using BotHunter during a live operational

deployment.

Next, we discuss our broader testing experiences in

two network environments. Here, our focus is on understanding BotHunter's

daily false positive (FP) performance, at least in the context of two

significantly different operational environments. A false positive in this

context refers to the generation of a bot profile in response to a

non-infection traffic flow, not to the number of IDS dialog warnings produced

by the BotHunter sensors. As stated previously, network administrators are not

expected to analyze individual IDS alarms. Indeed, we anticipate

external entities to regularly probe and attack our networks,

producing a regular flow of dialog warnings. Rather, we assert (and validate)

that the dialog combinations necessary to cause a bot detection should be rarely

encountered in normal operations.

5.1 Experiments in an In situ Virtual Network

Our evaluation setup uses a virtual network environment of three

VMware guest systems. The first is a Linux machine with IRC server

installed, which is used as the C&C server, and the other two are

Windows 2000 instances. We infect one of the Windows instances and

wait for it to connect to our C&C server. Upon connection

establishment, we instruct the bot to start scanning and infecting

neighboring hosts. We then await the infection and IRC C&C channel

join by the second Windows instance. By monitoring the network

activity of the second victim, we capture the full infection

dialog. This methodology provides a useful means to measure the false

negative performance of BotHunter.

We collected 10 different bot variants from three of the most

well-known IRC-based bot families [20]:

Agobot/Gaobot/Phatbot, SDBot/RBot/UrBot/UrXBot, and the

mIRC-based GTbot. We then ran BotHunter in this virtual network and

limited its correlation focus on the victim machine (essentially we

assume the HOMENET is the victim's IP). BotHunter successfully

detected all bot infections (and produced bot profiles for all).

We summarize our measurement results for this virtual network

infection experiment in Table 3. We use Yes or

No to indicate whether a certain dialog warning is reported in the final

profile. The two numbers within brackets are the number of generated

dialog warnings in the whole virtual network and the number involving our

victim, respectively. For example, for Phatbot-rls, 2,834

dialog warnings are generated by E2[rb] ([rb] means Snort rule base, [sl] means

SLADE), but only 46 are relevant to our bot infection victim. Observe

that although many warnings are generated by the sensors, only one bot

profile is generated for this infection. This shows that BotHunter

can significantly reduce the amount of information a security

administrator needs to analyze. In our

experiments almost all sensors worked as we expected. We do not see

E1 events for RBot because the RBot family does not provide any

commands to trigger a vertical scan for all infection vectors (such as

the "scan.startall" command provided by the Agobot/Phatbot family). The bot

master must indicate a specific infection vector and

port for each scan. We set our initial infection vector to DCOM, and

since this was successful the attacking host did not attempt further

exploits.

Table 3:

Dialog Summary of Virtual Network Infections

| E1 | E2[rb] | E2[sl] | E3 | E4 | E5 |

| agobot3-priv4 | Yes(2/2) | Yes(9/8) | Yes(6/6) | Yes(5) | Yes(38/8) | Yes(4/1) |

| phat-alpha5 | Yes(14/4) | Yes(5,785/5,721) | Yes(6/2) | Yes(3/3) | Yes(28/26) | Yes(4/2) |

| phatbot-rls | Yes(11/3) | Yes(2,834/46) | Yes(6/2) | Yes(8/8) | Yes(69/20) | Yes(6/2) |

| rbot0.6.6 | No(0) | Yes(2/1) | Yes(2/1) | Yes(2/2) | Yes(65/24) | Yes(2/1) |

| rxbot7.5 | No(0) | Yes(2/2) | Yes(2/2) | Yes(2/2) | Yes(70/27) | Yes(2/1) |

| rx-asn-2-re-workedv2 | No(0) | Yes(4/3) | Yes(3/2) | Yes(2/2) | Yes(59/18) | Yes(2/1) |

| Rxbot-ak-0.7-Modded.by.Uncanny | No(0) | Yes(3/2) | Yes(3/2) | Yes(2/2) | Yes(73/26) | Yes(2/1) |

| sxtbot6.5 | No(0) | Yes(3/2) | Yes(3/2) | Yes(2/2) | Yes(65/24) | Yes(2/1) |

| Urx-Special-Ed-UltrA-2005 | No(0) | Yes(3/2) | Yes(3/2) | Yes(2/2) | Yes(68/22) | Yes(2/1) |

| gt-with-dcom-profile1 | No(1/0) | Yes(5/3) | Yes(6/2) | No(0) | Yes(221/1) | No(4/0) |

| gt-with-dcom-profile2 | No(1/0) | No(5/0) | No(6/0) | No(0) | Yes(221/44) | Yes(4/2) |

| gt-with-dcom-10min-profile | No(1/0) | Yes(5/3) | Yes(6/3) | No(0) | Yes(221/51) | Yes(4/2) |

Note that two profiles are reported in the gt-with-dcom case. In the

first profile, only E2[rb],E2[sl] and E4 are observed. In profile 2,

E4 and E5 are observed (which is the case where we miss the initial

infection periods). Because this infection scenario is very slow and

lasts longer than our 4-minute correlation time window. Furthermore,

note that we do not have any detected E3 dialog warnings reported for this

infection sequence. Regardless, BotHunter successfully generates an

infection profile. This demonstrates the utility of BotHunter's

evidence-trail-based dialog correlation model. We also reran this

experiment with a 10-minute correlation time window, upon which

BotHunter also reported a single infection profile.

5.2 SRI Honeynet Experiments

Our experimental honeynet framework has three integral components.

The first component Drone manager is a software management

component that is responsible for keeping track of drone availability

and forwarding packets to various VMware instances. The address of

one of the interfaces of this Intel Xeon 3 GHz dual core system is set

to be the static route for the unused /17 network. The other

interface is used for communicating with the high-interaction

honeynet. Packet forwarding is accomplished using network address

translation. One important requirements for this system is to keep

track of infected drone systems and to recycle uninfected systems.

Upon detecting a probable infection (outbound connections), we mark

the drone as "tainted" to avoid reassigning that host to another

source. Tainted drones are saved for manual analysis or automatically

reverted back to previous clean snapshots after a fixed timeout. One

of the interesting observations during our study was that most

infection attempts did not succeed even on completely unpatched

Windows 2000 and Windows XP systems. As a result, a surprisingly

small number of VM instances was sufficient to monitor the sources

contacting the entire /17 network. The second component is the

high-interaction-honeynet system, which is hosted in a

high-performance Intel Xeon 3 GHz dual core, dual CPU system with 8 GB

of memory. For the experiments listed in this paper, we typically ran

the system with 9 Win-XP instances, 14 Windows 2000 instances (with

two different service pack levels), and 3 Linux FC3 instances. The

system was moderately utilized in this load. The final component is

the DNS/DHCP server, which dynamically assigns IP addresses to

VMware instances and also answers DNS queries from these hosts.

Over a 3-week period between March and April 2007, we analyzed a total of

2,019 successful WinXP and Win2K remote-exploit bot or worm infections.

Each malware infection instance succeeded in causing the honeypot

to initiate outbound communications related to the infection. Through

our analysis of these traces using BotHunter sensor logs, we were able to very

reliably observe the malware communications associated with the

remote-to-local network service infection and the malware binary

acquisition (egg download). In many instances we also observed the infected

honeypot proceed to establish C&C communications and attempt to

propagate to other victims in our honeynet. Through some of this

experiment, our DNS service operated unreliably and some C&C coordination

events were not observed due to DNS lookup failures.

Figure 7 illustrates a sample infection that was

detected using the SRI honeynet, and the corresponding BotHunter

profile. W32/IRCBot-TO is a very recent (released January 19, 2007)

network worm/bot that propagates through open network shares and

affects both Windows 2000 and Windows XP systems [37].

The worm uses the IPC share to connect to the SRVSVC pipe and

leverages the MS06-40 exploit [27], which is a buffer

overflow that enables attackers to craft RPC requests that can execute

arbitrary code. This mechanism is used to force the victim to fetch

and execute a binary named netadp.exe from the system folder. The

infected system then connects to the z3net IRC network and joins two

channels upon which it is instructed to initiate scans of 203.0.0.0/8

network on several ports. Other bot families successfully detected

by BotHunter included variants of W32/Korgo, W32/Virut.A and W32/Padobot.

Overall, BotHunter detected a total of 1,920 of these 2,019 successful

bot infections. This represents a 95.1% true positive rate.

All malware instances observed during this period transmitted their

exploits through ports TCP-445 or TCP-139. This is very common

behavior, as the malware we observe tends to exploit the first

vulnerable port that replies to a targeted scans, and ports TCP-445

and TCP-139 are usually among the first ports tested. The infection

set analyzed exhibited limited diversity in the infection transmission

methods, and overall we observed roughly 40 unique patterns in the

dialog warnings produced.

This experiment produced 99 bot infections that did not produce bot

profiles, i.e., a 4.9% false negative rate.

To explain these occurrences we manually examined each bot

infection trace that eluded BotHunter, using tcpdump and ethereal. The

reasons for these failed bot detections can be classified into three primary

categories: infection failures, honeynet setup or policy failures, or

data corruption failures.

- Infection failures: We observed infections in which the

exploit apparently led to instability and eventual failure in the infected host.

More commonly, we observed cases in which the infected victim attempt to

"phone home," but the SYN request received no reply.

- Honeynet setup and policy failures: We observed that our

NAT mechanism did not correctly translate application-level address requests

(e.g., ftp PORT commands). This prevented several FTP egg download connection

requests from proceeding, which would have otherwise led to egg download

detections. In addition, some traces were incomplete due to errors in our

honeypot recycling logic which interfered with our observation of the infection

logic.

- Data corruption failures: Data corruption was the dominant

reason (86% of the failed traces) in preventing our BotHunter sensors from

producing dialog warnings. We are still investigating the cause behind these

corruptions, but suspect that these likely happened during log rotations by

the Drone manager.

Discussion: In addition to the above false negative experiences, we also recognize

that others reasons could prevent BotHunter from detecting infections.

A natural extension of our infection failures is for a bot to purposely lay

dormant once it has infected a host to avoid association of the

infection transmission with an outbound egg download or coordination

event. This strategy could be used successfully to circumvent

BotHunter deployed with our default fixed pruning interval. While we

found some infected victims failed to phone home, we could also

envision the egg download source eventually responding to these

requests after the BotHunter pruning interval, causing a similar missed

association. Sensor coverage is of course another fundamental concern

for any detection mechanism. Finally, while these results are highly

encouraging, the honeynet environment provided a low-diversity in bot

infections, in which attention was centered on direct exploits of

TCP-445 and TCP-139. We did not provide a diversity of honeypots with

various OSs, vulnerable services, or Trojan backdoors enabled, to

fully examine the behavioral complexities of bots or worms.

5.3 An Example Detection in a Live Deployment

In addition to our laboratory and honeynet experiences,

we have also fielded BotHunter to networks within the

Georgia Tech campus network and within an SRI laboratory

network. In the next sections we will discuss these deployments and

our efforts to evaluate the false positive performance of BotHunter. First,

we will briefly describe one example host infection that was detected

using BotHunter within our Georgia Tech campus network experiments.

In early February 2007, BotHunter detected a bot infection that produced

E1, E4 and E5 dialog warnings. Upon inspection of the bot profile, we

observed that the bot-infected machine was scanned, joined an IRC channel,

and began scanning other machines during the BotHunter time window. One

unusual element in this experience was the omission of the actual infection

transmission event (E2), which is observed with high-frequency in our

live honeynet testing environment. We assert that the bot profile

represents an actual infection because during our examination of this

infection report, we discovered that the target of the E4 (C&C Server) dialog

warning was an address that was blacklisted both by the ShadowServer and the

botnet mailing list as a known C&C server during the time of our bot profile.

5.4 Experiments in a University Campus Network

In this experiment, we evaluate the detection and false positive

performance of BotHunter in a production campus network (at the

College of Computing [CoC] at Georgia Tech). The time period of this evaluation

was between October 2006 and February 2007.

The monitored link exhibits typical diurnal behavior and a sustained

peak traffic of over 100 Mbps during the day. While we were concerned

that such traffic rates might overload typical NIDS rulesets and

real-time detection systems, our experience shows that it is possible

to run BotHunter live under such high traffic rates using commodity

PCs. Our BotHunter instance runs on a Linux server with an Intel Xeon

3.6 GHz CPU and 6 GB of memory. The system runs with

average CPU and memory utilization of 28% and 3%, respectively.

To evaluate the representativeness of this traffic, we randomly

sampled packets for analysis (about 40 minutes). The packets in our

sample, which were almost evenly distributed between TCP and UDP,

demonstrated wide diversity in protocols, including popular protocols

such as HTTP, SMTP, POP, FTP, SSH, DNS, and SNMP, and collaborative

applications such as IM (e.g., ICQ, AIM), P2P (e.g., Gnutella,

Edonkey, bittorrent), and IRC, which share similarities with infection

dialog (e.g., two-way communication). We believe the high volume

of background traffic, involving large numbers of hosts and a diverse

application mix, offers an appealing environment to confirm our detection

performance, and to examine the false positive question.

First, we evaluated the detection performance of BotHunter in the presence

of significant background traffic. We

injected bot traffic captured in the virtual network (from the

experiments described in Section 5.1) into

the captured Georgia Tech network traffic. Our motivation was to

simulate real network infections for which we have the ground truth

information. In these experiments, BotHunter correctly detected all 10

injected infections (by the 10 bots described in

Section 5.1).

Table 4: Raw Alerts of BotHunter in 4 Month Operation in CoC Network

| Event | E1 | E2[rb] | E2[sl] | E3 | E4 | E5 |

| Alert# | 550,373 | 950,112 | 316,467 | 1,013 | 697,374 | 48,063 |

Next, we conducted a longer-term (4 months) evaluation of false alarm

production. Table 4 summarizes the number of dialog

warnings generated by BotHunter for each event type from October 2006 to

January 2007. BotHunter sensors generated about 2,563,402 (more than

20,000 per day) raw dialog warnings from all the five event categories.

For example, many E3 dialog warnings report on Windows executable downloads,

which by themselves do not shed light on the presence of exploitable