Delegating Responsibility in Digital Systems:

|

Mark S. Miller Google Research |

James E. Donnelley NERSC, LBNL |

Alan H. Karp Hewlett-Packard Labs |

Programs do good things, but also do bad, making software security more than a fad. The authority of programs, we do need to tame. But bad things still happen. Who do we blame? From the very beginnings of access control: Should we be safe by construction, or should we patrol? Horton shows how, in an elegant way, we can simply do both, and so save the day. with apologies to Dr. Seuss |

For reactive controls to work well, we must first proactively limit attackers to causing only repairable damage. A simple example is a wiki that proactively grants untrusted users only read access to history, so it can risk granting them write access to pages. If a user posts abuse, damaged pages can be restored, and that user's access can be reactively suspended. We need both approaches, but the main access control paradigms each seem to support only one well. This paper contributes a new answer to this dilemma.

Access Control List (ACL) systems support reactive control directly. ACL systems presume a program acts on behalf of its “user”. Access is allowed or disallowed by checking whether this operation on this resource is permitted for this user. By tagging all actions with the identity of the user they allegedly serve, they can log who to blame, and whose access to suspend. But ACLs are weak at proactive control. Solitaire runs with all its user's privileges. If it runs amok, it could do its user great harm.

Capabilities seem to have opposite strengths and weaknesses. A capability—like an object-reference in a memory-safe language—is a communicable and unforgeable token used both to designate some object and to permit access to that object. Because the term “capabilities” has since been used for many other access control rules [11], we now refer to the original model [2] as object-capabilities or ocaps for short.

By allowing the controlled delegation of narrow authority, ocap systems support proactive control well. The invoker of an object normally passes as arguments just those objects (capabilities) that the receiver needs to carry out that request. A user can likewise delegate to an application just that portion of the user's authority the application needs [21], limiting damage should it be corrupted by a virus.

Because ocaps operate on an anonymous “bearer right” basis, they seem to make reactive control impossible. Indeed, although many historical criticisms of ocaps have since been refuted [11, 16, 10, 17], a remaining unrefuted criticism is that they cannot record who to blame for which action [6]. This lack has led some to forego the benefits of ocaps.

How can we support both forms of control well in one system? One approach combines elements of the two paradigms in one architecture [7, 4]. Another emulates some of the virtues of one paradigm as a pattern built on the other. For example, Polaris [19] uses lessons learned from ocaps to limit the authority of ACL-based applications on Windows, as Plash [15] does on Unix, without modifying either these applications or their underlying ACL OS.

In this paper, we show how to attribute actions in an ocap system. As a tribute to Dr. Seuss [5], we call our protocol Horton (Higher-Order Responsibility Tracking of Objects in Networks). Horton can be interposed between existing ocap-based application objects, without modifying either these objects or their underlying ocap foundations. Horton supports identity-based tracking and control for delegating responsibility with authority. Horton thereby refutes this criticism of the ocap paradigm.

We explain Horton with a scenario where object A executes b.foo(c), intending to send the “foo” message to object B with a reference to object C as an argument. If A had direct references to B and C, then B would receive a direct reference to C.

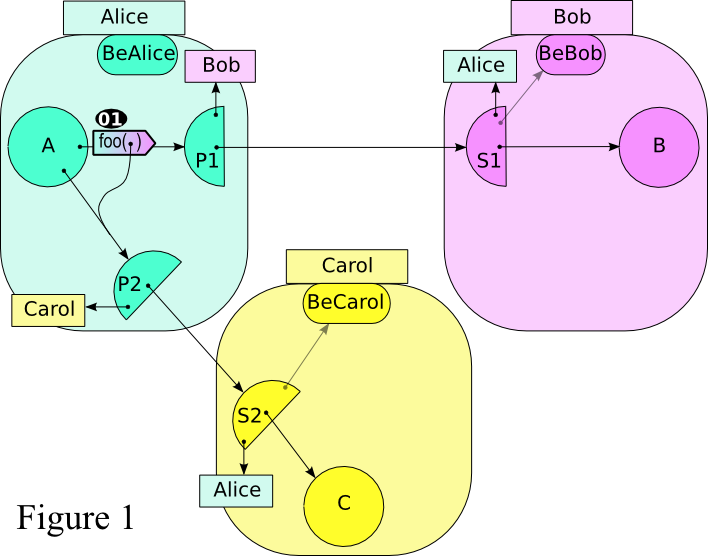

Imagine that A, B, and C are application-level objects contributed by mutually suspicious parties, Alice, Bob, and Carol, respectively. In order to attribute possible misbehavior and suspend access, Alice, Bob, and Carol interpose the intermediary objects shown in Figure 1: The outgoing half circles are proxies and incoming half circles are stubs. Under normal conditions, they wish their app-objects to proceed transparently, as if directly connected.

When A sends the “foo” message on the path to B, A actually sends

it to Alice's proxy P1 (Figure 1,  ). P1 logs the

outgoing message as a request that Bob is responsible for serving. P1 sends

an encoding of this message to Bob's stub S1, which logs that Alice is responsible

for making this request (Figure 2,

). P1 logs the

outgoing message as a request that Bob is responsible for serving. P1 sends

an encoding of this message to Bob's stub S1, which logs that Alice is responsible

for making this request (Figure 2,  ). S1 decodes

the encoded message into a “foo” message which it delivers to B

(Figure 3,

). S1 decodes

the encoded message into a “foo” message which it delivers to B

(Figure 3,  ). (Read the html online to see the figures “animate” by

clicking on them.)

). (Read the html online to see the figures “animate” by

clicking on them.)

If Alice decides she no longer wishes to use Bob's services, she shuts off her “Bob” proxies such as P1. If Bob decides he no longer wishes to accept Alice's requests, he shuts off his “Alice” stubs such as S1.

Every protocol which builds secure relationships must face two issues: 1) the base case, building an initial secure relationship between players not yet connected by this protocol, and 2) the inductive case, in which a new secure relationship is bootstrapped from earlier assumed-secure relationships.

This paper discusses the inductive case. Ocap systems create new relationships by passing arguments. We must show that the relationship represented by the new B→P3→S3→C path in Figure 3 attributes responsibility sensibly, assuming that the A→P1→S1→B and A→P2→S2→C paths already do. An example attack would be if Alice could fool Bob into blaming Carol for bad service provided by Alice. To avoid non-repudiation [1], we accept that Bob can log bad data fooling himself into blaming the wrong party.

What represents a responsible identity? Cryptographic protocols often represent identity as a key pair. For example, a public encryption key identifies whoever knows the corresponding private decryption key. Put another way, knowledge of a decryption key provides the ability to be (or speak for [9]) the entity identified by the corresponding encryption key.

In ocap systems, the sealer/unsealer pattern [12] provides a similar logic. Rectangles such as the one labeled “Alice” represent Who objects, providing a seal(contents) operation, returning an opaque box encapsulating the contents. All such rectangles with the same label are references to the same Who object. The corresponding BeAlice object provides the authority to be the entity identified by Alice's Who object. BeAlice provides an unseal(box) operation that returns the contents of a box sealed by Alice's Who. The large rounded rectangles and colors aggregate all objects we assume to behave according to the intentions of a given Who.

Complete Horton implementations in Java and E are available at erights.org/download/horton/. For expository purposes, the E code after Figure 3 shows just the Horton code needed for the illustrated case. The line numbers on the code show the order of execution taken by our example. The code expresses just the minimal default behavior for participating in the Horton protocol. The LA, LB, and LC lines mark opportune places for each to insert identity-based control hooks. Mostly, this simplified code uses just the simple sequential five-minute subset of E explained in [10, Ch6: A Taste of E].

When the foo message arrives at proxy P1, it does not match any of the proxy's method definitions, so it falls through to the match clause (02), which receives messages reflectively. The clause's head is a pattern matched against a pair of the message name (here, "foo") and the list of arguments (here, a list holding only proxy P2).

P1 asks for the value of P2's stub and whoBlame fields, which hold S2 and Carol's Who (03–05). (To protect against misbehaving app-objects, P1 actually does this by rights amplification [12] rather than the getGuts message shown here.) P1 then sends intro(whoBob) to S2 (06), by which Alice is saying in effect “Carol, I'd like to share with Bob my access to C. Could you create a stub for Bob's use?” Nothing forces Alice to share her rights in this indirect way; Alice's P1 could just give Bob direct access to S2. But then Carol would necessarily blame Alice for Bob's use of S2, which Alice might not like.

Carol makes S3 for Bob's use of C (08). Carol tags S3 with Bob's Who, so Carol can blame Bob for messages sent to S3. Carol then “gift wraps” S3 for Bob and returns gs3, the gift-wrapped S3, to Alice as the result of the intro message (09–11). Alice includes gs3 in the p3Desc record encoding the P2 argument of the original message (12). By including this in the deliver request to Bob's S1 (13), Alice is saying in effect “Bob, please unwrap this to get the ability to use a service provided by Carol.”

Bob's S1 unpacks the record (15), unwraps gs3 to get S3 (16–26), which it uses to make proxy P3 (27). Bob tags P3 with Carol's Who, so Bob can blame Carol for the behavior of S3. S1 then includes P3 as the argument of the app-level foo message it sends to B using E's reflective E.call primitive (28).

Clearly the unwrap function must be the inverse of the wrap function. Identity functions would be simple, but would also give Alice's P1 access to S3. Since P1 behaves as Alice wishes, P1's access to S3 would let Alice fool Carol into blaming Bob for messages Alice sends to S3.

Carol's S2 should at least gift-wrap S3 so only Bob can unwrap it. Could we simply use the seal/unseal operations of Bob's who/be pair as the wrap/unwrap functions? Unfortunately, this would still enable Alice to give Bob a gift allegedly from Carol, but which Bob unwraps to obtain a faux S3 created by Alice.

In our solution, Carol's wrap creates a provide function, seals it so only Bob can unseal it, and returns the resulting box as the wrapped gift (11). Bob's unwrap unseals it to get a provide function allegedly from Carol (18). Bob will need to call provide (21) so that only Carol can provide S3 to him. Bob declares an assignable result variable (19), and a fill function for Carol to call to set this variable to S3. He seals this in a box only Carol can unseal (20) and passes this to provide (21). Carol's provide unseals it to get Bob's fill function (23), which Carol can call to set the result to S3 (24–25). After Carol's provide returns, Bob's unwrap returns whatever it finds in the result variable (26).

Should Bob and Carol ever come to know that the other is independent of Alice, they can then blame each other, rather than Alice, for actions logged by P3 and S3. Say C is a wiki page. If Carol believes that Bob is not a pseudonym for Alice, and Carol decides that Bob has abused this page, Carol should then revoke Bob's access without revoking Alice's access by shutting off her “Bob” stubs such as S3. If Bob decides that C is flaky, he can stop using Carol's services by shutting off his “Carol” proxies such as P3. This completes the induction.

01: # A says:... b.foo(c) ... def makeProxy(whoBlame, stub) { return def proxy { 04: to getGuts() { # as P2 05: return [stub, whoBlame]} 02: match [verb, [p2]] { # as P1 03: def [s2, whoCarol] := p2.getGuts() 06: def gs3 := s2.intro(whoBlame) 12: def p3Desc := [gs3, whoCarol] LA: #...check and log service... 13: stub.deliver(verb, [p3Desc])}}} def makeStub(beMe, whoBlame, targ) { return def stub { 07: to intro(whoBob) { # as S2 08: def s3 := makeStub(beMe,whoBob,targ) LC: #...check and log intro... 09: return wrap(s3, whoBob, beMe)} 14: to deliver(verb, [p3Desc]) { # as S1 15: def [gs3, whoCarol] := p3Desc 16: def s3 := unwrap(gs3, whoCarol, beMe) 27: def p3 := makeProxy(whoCarol, s3) LB: #...check and log request... 28: E.call(targ, verb, [p3])}}} 29: # B implements:... to foo(c) {...} ... 10: def wrap(s3, whoBob, beCarol) { # as S2 22: def provide(fillBox) { 23: def fill := beCarol.unseal(fillBox) 24: fill(s3)} 11: return whoBob.seal(provide)} 17: def unwrap(gs3,whoCarol,beBob){ # as S1 18: def provide := beBob.unseal(gs3) 19: var result := null 25: def fill(s3) {result := s3} 20: def fillBox := whoCarol.seal(fill) 21: provide(fillBox) 26: return result}

Reactive security ocap patterns include the logging forwarder [18] and the caretaker [13]. Horton's main contribution is the inductive formation of these patterns among mutually suspicious parties.

Petmail [22] and SPKI [4] provide some Horton-like features in non-ocap systems. They show how Carol need not be involved during the delegation of C from Alice to Bob. Future work should try to express this in terms of ocaps, without explicit cryptopgraphy.

We thank the cap-talk community, especially Peter Amstutz, David Chizmadia, Tyler Close, Bill Frantz, David Hopwood, Terence Kelly, Charles Landau, Sandro Magi, Rob Meijer, Chip Morningstar, Toby Murray, Kevin Reid, Jonathan Shapiro, Terry Stanley, Marc Stiegler, Pierre Thierry, Brian Warner, and Meng Weng Wong.

This document was translated from LATEX by HEVEA.